Community Tech/Grant metrics tool

This page documents a project the Wikimedia Foundation's Community Tech team has worked on or declined in the past. Technical work on this project is complete.

We invite you to join the discussion on the talk page.

The goal of creating this tool is to:

- Provide grantees with a simple, easy-to-use tool for reporting their shared metrics, removing the need for any manual counting.

- Correct some of the known tool-related problems around collecting metrics, especially some of the known problems with Wikimetrics.

This tool would not be a replacement for the Program and Events Dashboard, which is primarily a program management tool. For more information about how this tool relates to other tools, please visit the #FAQ. For more background on this project, please visit #Background.

Project Status

Grant metrics tool is currently in development by the Community Tech Team. It has now been renamed to Event Metrics. For information about further project development, please go to the new project page. We are actively adding more features and tending to bugs. Please feel free to use the tool and leave feedback. You can follow the project development and join in the discussions on Phabricator.

Problems to solve

The main problems are:

- Counting things is boring, difficult and time-consuming. It's easy to forget. It's easy to make mistakes. For example, numbers might need to be de-duplicated across multiple events, which is very time-intensive.

- A lot of stuff is done manually for multiple reasons, such as:

- A tool is difficult to learn or use

- No tool exists to collect a specific metric (e.g. retention), or the ones that do are broken / need improvement (e.g. Wikimetrics)

- It's difficult to correctly attribute a person's contributions to a specific program/event. For example, in Wikimetrics all the edits a person makes within the time period are counted toward the program. In the Dashboard, this attribution happens only if the program is set up as a "Visiting Scholars" program, and all target articles are entered.

- The definition of metrics is inconsistent. For example, in Wikimetrics a "newly registered editor" is both someone who creates a username and an existing user who visits a project for the first time. So the definition of the metric in Wikimetrics doesn't match the definition of the metric being asked for.

- Privacy is important to organizers and participants, and is governed by various laws.

- Measuring retention is difficult. It means going into the contribution pages to check, weeks after the program is over.
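The de-duplication problem in the first item above is the kind of counting a tool can take over. The following is a minimal sketch, assuming each event is just a list of usernames. The event names, usernames, and the simplified `normalize` helper are made up for illustration and are not taken from the tool itself.

```python
def normalize(username):
    """Simplified username clean-up (an assumption for this sketch):
    trim whitespace, treat underscores as spaces, capitalise the first letter."""
    name = username.strip().replace("_", " ")
    return name[:1].upper() + name[1:]


def unique_participants(events):
    """Return the set of distinct usernames across all events.

    `events` maps a hypothetical event name to its list of usernames.
    """
    seen = set()
    for usernames in events.values():
        seen.update(normalize(u) for u in usernames)
    return seen


# Hypothetical data: two workshops with overlapping attendance.
events = {
    "Workshop 1": ["Alice", "Bob", "Carol"],
    "Workshop 2": ["bob", "Carol ", "Dave"],
}

per_event_total = sum(len(u) for u in events.values())  # counts Bob and Carol twice
program_total = len(unique_participants(events))        # counts each person once
```

Summing the per-event counts gives 6, while the de-duplicated program total is 4, which is the number a grantee would want to report.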

Proposed solution

Computers are better than people at counting things, and they enjoy it more. The grantee should only be responsible for collecting and inputting four pieces of information into the tool: usernames, some way to identify content they're planning to create/improve, the wiki(s) involved, and the time period of the event/program (i.e. the start and end date of the event/program).

From this information, the five metrics are calculated (i.e. x editors (incl x new editors) worked on x pages over this time period). The tool produces a report which can be generated at any time. The report includes totals for a program, and then breaks the stats down by event in that program (e.g. a series of editing workshops will have multiple workshops, where each workshop is an event).
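As an illustration of how little the grantee has to supply, the four inputs could be modelled roughly as below. This is only a sketch: the class name `EventInput`, its field names, and the `summary_line` helper are hypothetical, and the counts passed to `summary_line` are assumed to have been calculated elsewhere. An empty `wikis` tuple stands for "all wikis".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class EventInput:
    """The four pieces of information a grantee enters (hypothetical model)."""
    usernames: tuple
    content_filter: str  # some way to identify content, e.g. a category name
    wikis: tuple         # e.g. ("en.wikipedia",); empty means "all wikis"
    start: date
    end: date

    def __post_init__(self):
        # Reject an impossible time period early.
        if self.end < self.start:
            raise ValueError("end date must not be before start date")


def summary_line(event, editors, new_editors, pages):
    """Render already-calculated counts in the 'x editors ... x pages' form."""
    return (
        f"{editors} editors (incl. {new_editors} new editors) worked on "
        f"{pages} pages from {event.start.isoformat()} to {event.end.isoformat()}"
    )


workshop = EventInput(
    usernames=("Alice", "Bob"),
    content_filter="Category:Example workshop",
    wikis=("en.wikipedia",),
    start=date(2017, 8, 10),
    end=date(2017, 8, 12),
)
line = summary_line(workshop, editors=8, new_editors=3, pages=12)
# line == "8 editors (incl. 3 new editors) worked on 12 pages from 2017-08-10 to 2017-08-12"
```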

There will be a behind-the-scenes setup interface that Community Resources staff can customize. For instance, the definition of "retention" is likely to change in the medium term: it might take 5 edits instead of 1, or 30 days instead of 14. Any definition that involves a number should not be hard-coded.
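One way to keep such numbers out of the code is to hold them in a settings object that staff can override. The sketch below is an assumption about how that might look, not a description of the actual setup interface. The class `MetricSettings`, its field names, and the JSON override format are hypothetical; the default values are taken from the Definitions section of this page.

```python
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MetricSettings:
    """Tunable numbers behind the metric definitions (hypothetical names)."""
    new_editor_window_days: int = 14  # registered up to N days before the event
    retention_min_edits: int = 1      # edits needed to count as retained
    retention_window_days: int = 30   # days after the event end date


def load_settings(raw_json, defaults=MetricSettings()):
    """Apply staff overrides, given as a JSON object, on top of the defaults.

    An unknown key raises TypeError, so typos in the configuration are caught.
    """
    overrides = json.loads(raw_json)
    return replace(defaults, **overrides)


# Example: staff decide that retention should require 5 edits.
staff_config = '{"retention_min_edits": 5}'
settings = load_settings(staff_config)
```

With this approach, changing a definition means editing a stored value rather than releasing new code.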

How the tool works

#1. My Programs/Sign in

- Grant Metrics WMF title

- Text to describe the tool

- Username (logout)

- Table with Programs list

- Create Program button

#1b. Logged-out version

- Log in button goes to OAuth

#1c. Create/Update program page

#2. Program page

- Table with list of events, stats, total row

- Link for edit, remove and duplicate event in each event row

- Create event button

- Edit program link

- List of organizers

- Return to My Programs

#2b. Create Event interface

For "all wikis", you'd leave that line blank. (Check with folks to see if that's obvious or not.)

#2c. Remove event interface

#2d. Add/remove organizers interface

#2e. Update event page

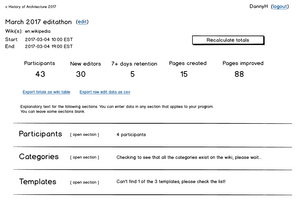

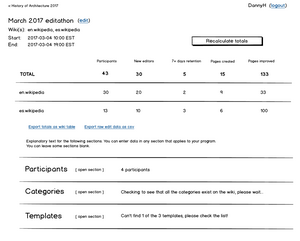

#3. Event page

Mockup: Event page with three sections and total stats, for one Wikipedia language

Mockup: Event page with stats broken out by wiki, for events with two Wikipedia languages

Mockup: Event page for Commons

Mockup: Event page for Wikidata

- Title/wiki/dates, with edit button

- Current totals

- Calculate/recalculate button

- Export: totals to wiki table, raw data to csv

- Explanation of the sections

- Return to Program page

- 3 accordion sections: Participants, Categories, Templates
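The two export targets in the list above could be produced along these lines. This is a sketch only: the function names and the CSV column names (`username`, `wiki`, `page`, `action`) are hypothetical, since the export format has not been defined on this page. The wiki table uses standard MediaWiki table markup.

```python
import csv
import io


def totals_to_wikitable(totals):
    """Render a {metric: value} mapping as MediaWiki table markup."""
    lines = ['{| class="wikitable"', "! Metric !! Total"]
    for metric, value in totals.items():
        lines.append("|-")
        lines.append(f"| {metric} || {value}")
    lines.append("|}")
    return "\n".join(lines)


def raw_data_to_csv(rows, fieldnames):
    """Render a list of row dicts as CSV text with a header row."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical totals and raw rows for one event.
wikitable = totals_to_wikitable({"Participants": 8, "New editors": 3})
csv_text = raw_data_to_csv(
    [{"username": "Alice", "wiki": "en.wikipedia", "page": "Example", "action": "created"}],
    fieldnames=["username", "wiki", "page", "action"],
)
```

The wiki table text can be pasted straight into a report page, while the CSV keeps one row per contribution for anyone who wants to analyse the raw data further.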

For Wikidata: we need to find out what the appropriate stats are.

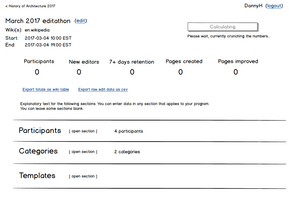

#3b. Calculating and recalculating

Mockup: Still saving the Categories, calculate button is disabled

Mockup: Participants and Categories are saved, calculate button is active

Mockup: Progress message while calculating

Mockup: After calculating the totals, there's a "last updated" message. The totals won't update automatically; select "Calculate totals" again to update them.

Mockup: Added more participants, warning message reminding you to recalculate the totals

#3c. Editing title/wiki/dates interface

#3d. Export interface

#3e. Format for csv

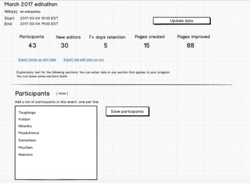

#3.1 Event page - Participants section

Mockup: Adding list of participants in the input field

Mockup: Click "Save participants". The status message says "Checking to see that all of the participants have accounts on the wiki(s), please wait..."

Mockup: Error warning for participant that doesn't exist, with list of troubleshooting tips

Mockup: All participants saved, status message says "8 participants"

Note: Check the list of troubleshooting tips against what the tool actually does.

#3.2 Event page - Categories section

Each category and template needs a wiki. If there's only one wiki that the event is running on, all of the categories will have that wiki set automatically. If there's more than one wiki, there will be a dropdown with the available wikis.

If an event is happening on "all wikis", then they probably won't be using either categories or templates. Is that right? Check with people who do "all wikis" events.

Note: Category depth is important -- example: commons:Category:ArtAndFeminism 2017. We have to look at all pages/files in the category, including subcategories several levels deep.
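A depth-limited walk over the category tree is one way to handle this. The sketch below works on in-memory dictionaries with made-up subcategory and file names; a real implementation would have to fetch category members from the wiki, which is not shown here. The function name and parameters are hypothetical. It also guards against category loops, which can occur on wikis.

```python
from collections import deque


def pages_in_category(root, subcats, members, max_depth):
    """Collect pages in `root` and its subcategories, up to `max_depth` levels down.

    `subcats` maps a category to its direct subcategories and `members` maps a
    category to its direct pages/files (both hypothetical in-memory stand-ins).
    """
    seen = {root}               # categories already queued, to survive loops
    queue = deque([(root, 0)])
    pages = set()
    while queue:
        category, depth = queue.popleft()
        pages.update(members.get(category, ()))
        if depth == max_depth:
            continue            # do not descend any further
        for child in subcats.get(category, ()):
            if child not in seen:
                seen.add(child)
                queue.append((child, depth + 1))
    return pages


# Made-up structure below the category named in the note above.
subcats = {
    "ArtAndFeminism 2017": ["Event A"],
    "Event A": ["Event A uploads"],
    "Event A uploads": ["ArtAndFeminism 2017"],  # deliberate loop
}
members = {
    "ArtAndFeminism 2017": ["File:Poster.jpg"],
    "Event A": ["File:Group photo.jpg"],
    "Event A uploads": ["File:Portrait 1.jpg", "File:Portrait 2.jpg"],
}

top_level_only = pages_in_category("ArtAndFeminism 2017", subcats, members, max_depth=0)
two_levels = pages_in_category("ArtAndFeminism 2017", subcats, members, max_depth=2)
```

With `max_depth=0` only the poster is found; with `max_depth=2` all four files are found, and the loop back to the root category does not cause repeated work.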

#3.3 Event page - Templates section

General feedback

Wikimedia Foundation grantees provided feedback on their general experience with collecting metrics and their impressions of draft images of the Grant metrics tool. This feedback was collected from the talk page, by e-mail, and from attendees at Wikimania 2017. The main highlights include the following:

- Attributing relevant contributions to editors for an event is difficult: Respondents reported using various manual means to report this information, such as using Special:Contributions, or the Global User Contributions tool, copy-and-pasting the relevant edits in a given timeframe, and cleaning them up to identify articles and edits.

- Videos and documentation did not help people learn how to use Wikimetrics: Respondents reported using the videos and documentation associated with Wikimetrics to gather information about their events, but these resources were not helpful in actually gathering desired information from the tool.

- The use case for gathering metrics from a list of pages lacks support: Event organizers can create pages with lists of articles or other pages for event participants to consider working on. However, for various reasons, respondents reported that gathering metrics from these kinds of lists would not be helpful to them. For some, it was because maintaining and updating such pages was considered too taxing. Some also noted that focusing on an assigned list of articles was not consistent with their goals for the event; participants often bring in their own ideas for what they want to edit, and organizers themselves prefer not to assign articles to participants.

- Ability to edit a cohort is a needed feature: Respondents reported frustration that cohorts -- groups of editors to gather metrics on -- cannot be edited in Wikimetrics once they are created. It requires organizers to create multiple cohorts to gather specific kinds of metrics, resulting in lists of cohorts that are difficult to maintain over time.

- Mixed feedback on whether participant sign-up as a feature should be prioritized in development or not: Respondents were split on whether having event participants directly sign in to the interface to track their contributions directly was a primary need for the tool or not. Respondents who reported that it was not a priority noted that new editors become disoriented and confused when asked to switch from Wikimedia projects to off-wiki tools. They also reported that some editors simply elect not to sign in. Respondents who supported making this feature a priority noted that they would be more likely to use it for very large events where several hundred participants are expected, and also emphasized the importance of making the sign-in process easy.

- Categories or templates were not generally used to capture contributions outside of Commons, but respondents were interested in doing so: Respondents acknowledged the usefulness of this strategy for tracking contributions. The ability to define category depth was discussed as necessary to make category-based tracking effective.

Based on this initial feedback, Community Tech and Community Resources plan to focus on the following tasks:

Community Tech tasks

Things to change in the spec:

- Take Pages out, the use case isn't strong enough

- Status messages need to be clearer, in human language (rather than "validating").

- "Show/hide" is confusing. Use "edit" or something similar. -- trying out "open section"

- Error message for invalid user should give troubleshooting advice

- Add when last calculation was done, "Recalculate totals" is grayed out, "calculation up to date"

- Post the roadmap when we're clear what's going to be v1 and v2 (as draft, we'll need to update)

- Different metrics for Commons and Wikidata

- Wireframes for Categories and Templates

- Make it clearer that you can use any or all of Pages/Categories/Templates

- Define what the export CSV table looks like

- Make a copy of a program -- for monthly events with the same people.

- Click on the stat (name or number) and see a list of pages created/edited?

Community Resources tasks

- Develop and test training resources to ensure they help organizers use the tool and effectively troubleshoot.

- Show organizers how to use categories/templates as a way to track contributions (for projects where this practice is consistent with community policies/guidelines)

- Establish who is responsible for maintaining the tool, how they should be contacted by users, and how/where this troubleshooting process should be detailed so it is accessible for users.

Roadmap (draft)

The development of this tool will involve releasing an initial v1 version, and then refining and adding more features in later iterations. We want the users whose needs are met with the first version to start using it, and the things that we learn from releasing the first version will help to inform the further development of the tool. We call the first version the "minimum viable product", meaning that there are some users who will be able to use the tool successfully, even without the later iterations.

Below is a list of things that may not appear in the first version, and will be added in later iterations. We'll update this list when we're further into development. If a feature you're looking forward to is on this list, it's not because we think it's unimportant.

- Other Wikimedia projects -- do Wikipedias and Commons first

- Categories/templates -- do participant list first

- Make a copy of this program/event

- Click on the stat to get a list of pages created/edited

- Mixed Latin/non-Latin script in the same event/program, and mixed LTR/RTL -- mixed may turn out not to be feasible at all, so people will have to split event stats between LTR/RTL, as two separate events

A more up-to-date list of features may be found on the roadmap Phabricator task.

Background

Community Resources currently asks grantees to report on shared metrics, as a part of their grant. These metrics were chosen after consultation with grantees and grant committee members last year. However, these metrics were a compromise; grantees preferred two different metrics that did not have easy-to-use tools available. The metrics were "new editor retention" and "pages created/improved, separated by Wikimedia project" (e.g. contributions to Commons are reported separately from contributions to Wikidata).

Over the last few months, Community Resources and Community Tech have been working together to design a new tool that would address these problems, as well as long-standing known problems within Wikimetrics. The specific list of problems is included in #Problems.

Older versions of this idea can be found in the archive. Earlier versions of this idea were shared with a small group of grantees at the Wikimedia Conference, to gauge if we were on the right path. Their early feedback was incorporated to create this version of the specifications.

The tool would primarily benefit grantees receiving Annual Plan Grants and Project Grants from the WMF, because these are the only programs that are asked to report on grant metrics.

The first version of this tool will only support the five metrics listed above. Grantees often provide a variety of other metrics in their progress and impact reports, especially if their goal isn't specifically about participants and content pages. Once this version of the tool is up and running, it may support other metrics in the future. We're happy to talk about ideas and suggestions for future metrics to support.

Definitions

Community Resources asks APG and Project grantees to report a few shared metrics, some of which aren't required today because of tool-related problems.

- # of participants

- # of new editors. Specifically, the number of new accounts created, including users that registered up to 14 days before the event.

- # of new editors retained. For this metric, "retention" is defined as people who make at least one edit, in any Wikimedia project (in any namespace), between the time when the event ends and 30 days after that end date.

- # content pages created, reported by Wikimedia project. A content page is a page in either the main or file namespace.

- # content pages improved, reported by Wikimedia project. A content page is a page in either the main or file namespace.
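The "new editor" and "retained" definitions above can be expressed as two small checks. This is a sketch under stated assumptions: it works with calendar dates rather than exact timestamps, it treats the boundaries as inclusive where the text is not explicit, and it excludes edits made on the end date itself. The function names are hypothetical, and the numbers are parameters so that they are not hard-coded.

```python
from datetime import date, timedelta


def is_new_editor(registered, event_start, event_end, window_days=14):
    """True if the account was created during the event or up to
    `window_days` days before it started."""
    earliest = event_start - timedelta(days=window_days)
    return earliest <= registered <= event_end


def is_retained(edit_dates, event_end, window_days=30, min_edits=1):
    """True if at least `min_edits` edits (any project, any namespace) fall
    after the event end date and no later than `window_days` days after it."""
    deadline = event_end + timedelta(days=window_days)
    count = sum(1 for d in edit_dates if event_end < d <= deadline)
    return count >= min_edits


# Hypothetical event running 10-12 August 2017.
start, end = date(2017, 8, 10), date(2017, 8, 12)

registered_two_weeks_before = is_new_editor(date(2017, 7, 27), start, end)  # True
registered_too_early = is_new_editor(date(2017, 7, 26), start, end)         # False
edited_within_30_days = is_retained([date(2017, 9, 11)], end)               # True
edited_too_late = is_retained([date(2017, 9, 12)], end)                     # False
```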

FAQ

- This tool is basically an update to Wikimetrics. It is being updated to make it easier to gather the few grant metrics that are shared across grants, which are slightly different than the old Global Metrics. It also specifically addresses problems that users have expressed about Wikimetrics, such as the following:

- Grantees have expressed difficulty with using the Wikimetrics interface. The current tool will use an interface that is more intuitive and straightforward for grantees to use.

- Measuring new editor retention with Wikimetrics is difficult due to how new editors are defined in the tool and because it requires some additional steps, such as creating a separate list of new editors. In the current tool, a clear definition for "new editors" and a "retained new editor" will be set, which will allow this metric to be provided automatically.

- Attributing edits to a particular event is not possible in Wikimetrics. The current tool will allow users to attribute edits based on specified categories, templates, pages, and contributors.

- You will not need to specify usernames to gather metrics for an event, if you don't have them.

- This tool will differ from the dashboard in a few ways:

- This tool can only be used after the program is over, and will only focus on reporting the outputs of the program. In contrast, the Dashboard has the additional ability to track what people are doing during the event, and can also be used for program management.

- It will be a tool that focuses exclusively on tracking metrics, which might be faster for those running smaller events/programs who just want metrics but not the other features of the Dashboard.

- This tool is basically an update to Wikimetrics. It will utilize certain parts of Wikimetrics as a foundation, but include a simpler interface. It will also include new functionality, such as the ability to create Programs, which can include multiple events with duplicate/different groups of participants. In order to add this new functionality without further complicating the interface, we've decided to build a completely new interface for the tool.

- The Dashboard serves more than just WMF grantees, and has been optimized to serve particular programs well (e.g. Education programs, which are long-term programs with a single group of students, who either choose or are assigned articles by the teacher). Those who use the Dashboard can continue to do so. However, for those who choose not to use the Dashboard, or find it's not the right tool for their program, this tool is an alternative that will still help them get the basic metrics to fulfill their requirement to WMF.

- Yes. We will be working to ensure the tool is adequately translated as broadly as possible. When we develop the tool, all the interface messages will be translatable through Translatewiki. Volunteer translators in each language will be able to contribute translations, and users will see the interface messages in their language. More info on Translatewiki: [1]

- After we have gotten feedback from a broad set of grantees, we will be integrating that feedback into the specifications you see on the page (or changing them entirely based on what we hear). The goal is to make the tool as useful to grantees as possible. After that, we will begin developing a prototype, which we’ll test with people who are interested. If you are interested in being an alpha tester, please let us know!

- For general questions or feedback on this project, leave a comment on the talk page or contact Sati Houston or Chris Schilling.

- If you have a specific technical question or concern, contact Danny Horn or leave a comment on the talk page.