Grants:Project/Maxlath/WikidataJS/Final

This project is funded by a Project Grant


- Report under review

- To read the approved grant submission for this project, please visit Grants:Project/Maxlath/WikidataJS.

- Review the reporting requirements to better understand the reporting process.

- Review all Project Grant reports under review.

- Please email projectgrants@wikimedia.org if you have additional questions.

Welcome to this project's final report! This report shares the outcomes, impact and learnings from the grantee's project.

Part 1: The Project

Summary

Following on from what was achieved in the first part of the Grant (see Grants:Project/Maxlath/WikidataJS/Midpoint), various improvements, new features, and bug fixes were published on the WikidataJS tools, but the biggest achievement was the transition from Wikidata-centered tools to Wikibase-agnostic tools, to the point where they should now rather be called WikibaseJS tools. Improving support for non-Wikidata Wikibase instances was considered a top priority given the level of attention Wikibase is getting in and out of Wikimedia (see the recently published Strategy for the Wikibase Ecosystem paper). This transition was more challenging and time consuming than expected, as the coupling of the WikidataJS tools with wikidata.org ran deep into the code and into those tools' very architecture.

Project Goals

Provide JavaScript developers with well-designed primitive building blocks to access Wikidata data in an easy, simplified-by-default way. This approach stayed at the heart of the tools' design principles, to the point where some people would be sort of "disappointed" by how primitive those can be, with the recurring example of wikidata-sdk only generating request URLs instead of making the requests itself.

On that example, given how scattered the JS ecosystem is, I remain convinced that, when possible, this is the right approach to stay relevant without having to periodically change HTTP lib, as we currently see in wikidata-edit (where not making those requests was not an option). This led some people to rewrap wikidata-sdk (e.g. wikidata-sdk-got), which is totally fine. Another argument in favor of staying lean is to avoid generating bloated applications where each lib has its own way of performing certain elementary actions (and that's how we end up with that bad reputation of node_modules being somewhat heavy).
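As a rough illustration of that URL-only approach, here is a minimal sketch. `buildGetEntitiesUrl` is a hypothetical helper written for this report, not the actual wikidata-sdk API, and it assumes the Wikibase instance exposes its API at `/w/api.php` (true for wikidata.org, but the script path may differ on other instances).

```javascript
// Hypothetical helper (not the actual wikidata-sdk API): it only builds the
// request URL and leaves the HTTP request to whatever library the caller uses.
// Assumption: the instance serves its API at /w/api.php.
function buildGetEntitiesUrl (instance, ids) {
  const url = new URL('/w/api.php', instance)
  url.search = new URLSearchParams({
    action: 'wbgetentities',
    ids: ids.join('|'),
    format: 'json'
  }).toString()
  return url.toString()
}

// The same function works for Wikidata or any other Wikibase instance
const entitiesUrl = buildGetEntitiesUrl('https://www.wikidata.org', [ 'Q1', 'Q2' ])
// The caller then fetches entitiesUrl with the HTTP lib of their choice
```

Since the helper returns a plain string, switching HTTP libraries later only affects the calling code, not the URL builder.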

Provide the Wikidata community with quality JS and CLI tools to edit Wikidata, either manually or automatically. While it's hard to assess the quality of those tools, few tickets are being opened for undesired behaviors from the tools. Part of the objective has been to make known errors easier for developers to understand: this has been done by catching errors to reword their message before rethrowing, possibly logging the URL of a Phabricator ticket (well-known Wikibase errors) or a GitHub ticket (well-known WikibaseJS errors).
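The catch, reword, and rethrow pattern described above could look roughly like the following sketch. All names (`knownErrors`, `rewordKnownError`, `withRewordedErrors`), the error pattern, and the ticket URL are placeholders for illustration, not the tools' actual code or real tickets.

```javascript
// Hypothetical table of known errors: the pattern and the ticket URL are
// placeholders, not real Wikibase error codes or real tickets.
const knownErrors = [
  {
    pattern: /some-known-error-code/,
    hint: 'the edit was rejected by the Wikibase instance',
    ticket: 'https://example.org/tickets/placeholder'
  }
]

// Builds a reworded error pointing to the ticket when the message matches a
// known error; unknown errors are returned untouched.
function rewordKnownError (err) {
  const known = knownErrors.find(({ pattern }) => pattern.test(err.message))
  if (!known) return err
  const reworded = new Error(`${known.hint} (original message: ${err.message}; see ${known.ticket})`)
  reworded.cause = err
  return reworded
}

// Wraps an async function so that its errors are reworded before rethrowing
function withRewordedErrors (fn) {
  return async (...args) => {
    try {
      return await fn(...args)
    } catch (err) {
      throw rewordKnownError(err)
    }
  }
}
```

Keeping the original error on `cause` means the reworded message never hides the underlying stack trace from whoever needs to debug further.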

Document the tools to help communities around other programming languages learn from them. With the transition to Wikibase-agnostic tools, keeping the documentation complete and up-to-date proved to be challenging. Beyond the markdown documentation, there were also constant efforts to keep the code readable and organized in a predictable way. The quality of the documentation has been reported as a strong asset of those tools, and there is still some homework to do to recover this quality now that the transition is fully done. A particularly painful point is that nearly all the examples in this documentation were using P and Q ids from Wikidata, and thus made sense (that is, as much sense as obscure identifiers can make) within the Wikidata project. The difficulty is that it is not clear whether Wikidata-free examples would help non-Wikidata users more than they would damage the understanding of those tools for Wikidata users, who we can assume are still the majority of the people coming to those tools.

Project Impact

Targets

| Planned measure of success (include numeric target, if applicable) | Actual result | Explanation |
| --- | --- | --- |
| increase manual editing of Wikidata from the command-line: +10,000 edits | could not be measured precisely, but a fair amount can be found by looking at the contribution history of wikibase-cli users (example with my own contributions) | it will become measurable with the recently added support for tags on Wikidata revisions, as soon as wikibase-edit (and thus wikibase-cli) gets support for it too |
| increase manual editing of Wikidata from third-party applications: +10,000 edits | same as above | we can have the list of recent edits made with wikibase-edit by a known third-party application, inventaire.io, but that's just one of the known users |
| increase automated editing of Wikidata from scripts: +1,000,000 edits | same as above | less happened in my own scripts but I would assume other people used it. Unfortunately, in the absence of tags, I just can't identify those contributions. |
| new features & maintenance: +100 commits in total in the various tools repositories | 232 + 223 + 274 + 36 = 765 commits among the 4 tools repositories | Count generated with the command git log --abbrev-commit --since=2018-04-01 |
| responsiveness: this grant should allow addressing all the issues opened by the community, ideally within a month, and never leaving more than 5 open issues/pull requests per repo | 7 + 7 + 14 + 6 = 34 issues currently open in 4 repositories | That was a hard promise to keep in the first place. On the bright side, most of the remaining open issues are feature requests, and all critical bugs (lib crashing in what should be a valid use case) got fixed. |
| documentation: all features documented in Markdown, at least one code example per feature | it's sometimes minimal but we are close to that! some minor, self-explanatory features remain undocumented | |

Story

What was originally a set of tools designed to support only my own use cases has grown to become an important part of the Wikidata and Wikibase technical ecosystem, with many contributors inside and outside Wikimedia projects pinging and opening feature requests, pulling the tools toward more diverse use cases. The apogee of this trend was the request by the Wikibase team at the Wikimedia Hackathon in Prague to initiate the transition to Wikibase-agnostic tools, followed by discussions at Wikimania to consider the integration of wikidata-cli within wmde wikibase-docker.

Survey(s)

Other

Download counts

Download counts are interesting but, apart from CLI tools, should not be considered an accurate measure of the use of a tool:

- one download of wikidata-sdk or wikidata-edit might result in many visitors, users, contributors, benefiting from the tool's possibilities

- automated tools (such as CI tools) downloads are also included

Download counts for each package

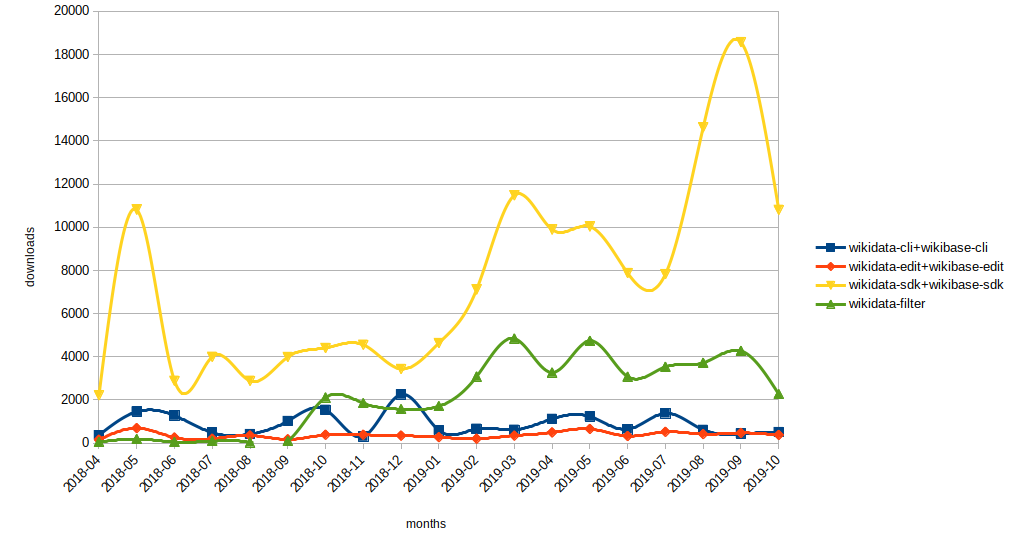

Download counts after regrouping renamed packages counts

Cumulated count for all packages

The most surprising thing I learned from those statistics is that wikidata-filter is getting much more attention than I realized, while it got far fewer commits than the other packages. The reason for this number of downloads isn't clear unfortunately: how are all those people parsing Wikidata dumps?!?

Methods and activities

Developments

An exhaustive list of the tools' new features can be found in the projects' change logs and issues, while bug fixes can be found in commit messages and issues too:

- wikibase-sdk: change logs, commits history, issues

- wikibase-edit: change logs, commits history, issues

- wikibase-cli: change logs, commits history, issues

- wikidata-filter: change logs, commits history, issues

Project resources

Development resources

- wikibase-edit

- wikidata-filter

Communication resources

Learning

The coding part didn't go without some difficulties, especially when undertaking such a deep transition from Wikidata to Wikibase-agnosticism, which took much more time than expected due to the deep coupling the tools had developed with Wikidata.

But the most challenging part has been stepping away from code to fill in reports targeting non-technical readers, given that this grant is eminently technical. Having to explain why those tools are necessary in the first place, or why a certain architecture is preferable to another, and filling in report questions when you have the feeling that the code documentation should have been enough had the review been done by peers, proved to be mentally exhausting and time consuming. I'm not sure what my learning has been there, other than a reinforced aversion to reports.

What worked well

What didn’t work

- tracking the time spent on the different features was a failure: the time spent designing, reflecting on an evolution, or changing the architecture can span several months, and there is just no decent way to keep track of that.

Other recommendations

- Communication with users through GitHub issues, Twitter hashtags, and even via direct email and chat, worked quite well

Next steps and opportunities

The tools' development will definitely continue! It's just not clear how much time I will be able to give it, and how the community could support it.

Part 2: The Grant

Finances

Actual spending

- development [250h]: 8100 €

- communication [10h]: 400 €

Remaining funds

Do you have any unspent funds from the grant?

Please answer yes or no. If yes, list the amount you did not use and explain why.

- no

If you have unspent funds, they must be returned to WMF. Please see the instructions for returning unspent funds and indicate here if this is still in progress, or if this is already completed:

Documentation

Did you send documentation of all expenses paid with grant funds to grantsadmin@wikimedia.org, according to the guidelines here?

Please answer yes or no. If no, include an explanation.

- yes

Confirmation of project status

Did you comply with the requirements specified by WMF in the grant agreement?

Please answer yes or no.

- yes

Is your project completed?

Please answer yes or no.

- yes

Grantee reflection

We’d love to hear any thoughts you have on what this project has meant to you, or how the experience of being a grantee has gone overall. Is there something that surprised you, or that you particularly enjoyed, or that you’ll do differently going forward as a result of the Project Grant experience? Please share it here!

It was great to get official support from Wikimedia for this work I had started on my own; it meant a lot to me. For the rest, I found that the Project Grant application and reporting process wasn't well suited to this kind of technical project: I feel that documentation, changelogs, commit messages, and the different statistics generated by the tools we use in this ecosystem (git forge graphs, npm download counts, etc.) should be enough, and could spare the need to give so much time to reporting. The condition for this to work would be that those Technical Project Grants are selected and reviewed by technical community peers. The Wikimedia Hackathon could then be put to use to gather the different grantees and help the community learn more about the different Technical Project Grants going on.

On the other hand, I do see the value of sharing what is happening in this space with a less technical audience, but the cost of filling in reports still feels disproportionate. (I hope those statements don't come across as harsh; they should be nuanced by the fact that my own aversion to reporting might be to blame ><)

Finally, I would like to thank all the people who brought their support, be it on the Project Grant page or through GitHub and social networks, the grant committee, and all the awesome Wikimedia people with whom I had the pleasure to exchange during this grant, in particular the Wikidata and Wikibase team and community: it's just the beginning!