Research:HHVM newcomer engagement experiment

Recent work has shown that changes in performance (specifically, page load times) can have dramatic effects on user engagement[citation needed]. The platform team at the Wikimedia Foundation has been experimenting with deployments of the HipHop Virtual Machine (HHVM), a new execution engine for PHP that promises substantial improvements in page load times. The benefit is especially noticeable for logged-in editors, since their page loads are generally not served by the Varnish caching servers and therefore have to be computed on request. Performance analysis suggests that pages generally load 50% faster.

We hypothesize that the type of performance improvement that HHVM provides will result in improved engagement of editors in Wikipedia. In this study, we focus on new editor engagement.

Summary

Our results show no significant difference in newcomer engagement measures when HHVM was enabled. See the Discussion section.

Research questions

Our primary research question is this: How does the performance improvement of HHVM affect the level of engagement for new users?

If we assume that editors have a fixed amount of time to spend contributing to Wikipedia, then we should expect that a performance improvement on the site will allow them to save more edits in the time they have.

- Hypothesis 1: Efficiency: New editors with HHVM will save more edits.

An improved user experience has the potential to increase the level of investment that editors make in Wikipedia. If that's true, we should expect that editors will either edit Wikipedia for longer per session or find time to edit Wikipedia more often.

- Hypothesis 2: Investment: New editors with HHVM will spend more time editing.

Experimental design

- Bucketing period -- exactly one week

- Start: Tue, 28 Oct 2014 01:00:00 UTC

- End: Tue, 4 Nov 2014 01:00:00 UTC (exactly one week)

- Treatment -- 50/50 HHVM/PHP5

- All accounts registered within the bucketing period were split 50/50 between PHP5 and HHVM

- Users were round-robin sampled based on user_id (evens got HHVM, odds got PHP5)

- Observation -- one week after registration

- All bucketed users were observed for one week

- Measures of the rate of editing and time spent editing were taken
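The bucketing rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `assign_bucket`, the `"hhvm"`/`"php5"` labels, and the half-open window (start included, end excluded) are hypothetical and are not taken from the actual treatment code.

```python
from datetime import datetime, timezone

# Bucketing window as stated in the experimental design (UTC).
BUCKET_START = datetime(2014, 10, 28, 1, 0, 0, tzinfo=timezone.utc)
BUCKET_END = datetime(2014, 11, 4, 1, 0, 0, tzinfo=timezone.utc)


def assign_bucket(user_id, registered_at):
    """Return 'hhvm', 'php5', or None for one newly registered account.

    Hypothetical helper: the name, the return labels, and the half-open
    window [start, end) are assumptions made for this illustration.
    """
    if not (BUCKET_START <= registered_at < BUCKET_END):
        return None  # registered outside the bucketing period
    # Even user_ids got HHVM, odd user_ids got PHP5.
    return "hhvm" if user_id % 2 == 0 else "php5"
```

Because user_ids are handed out sequentially at registration, splitting on parity should give two groups of nearly equal size without any extra bookkeeping.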

Results

Proportion measures

Here, we see no strong effects of the treatment (HHVM). The only difference that might be significant is the rate of editor activation in the case of Desktop, but it is in the opposite direction from what we'd expect.

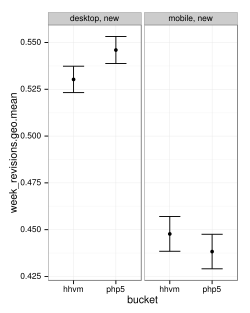
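For readers who want to check a proportion measure themselves, one common parametric approach is a two-sided z-test on the difference between two proportions. The sketch below is an assumption about how such a comparison could be run, not a description of the analysis code used in this study. The function name is hypothetical and the counts in the example are made up.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for a difference between two proportions.

    Returns (z, p_value). Uses the pooled-proportion standard error,
    so it assumes the pooled proportion is strictly between 0 and 1.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts for illustration only; these are NOT the study's data.
z, p = two_proportion_z_test(successes_a=300, n_a=1000,
                             successes_b=310, n_b=1000)
print(f"z = {z:.3f}, p = {p:.3f}")
```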

Scalar measures

Again, we don't see any clear differences, except perhaps a small, counter-intuitive one in the raw number of revisions saved, which washes out when we filter for article edits and productive edits.
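Scalar measures such as edit counts tend to be heavily skewed, so a nonparametric comparison is a natural cross-check. The sketch below shows one such strategy, a permutation test on the difference in means. It is only an illustration: this page does not say which specific tests were used, and the function name and default parameters are assumptions.

```python
import random


def permutation_test(group_a, group_b, n_resamples=10000, seed=0):
    """Two-sided permutation test on the difference in means.

    Returns the estimated probability that a random relabeling of the
    pooled values gives an absolute mean difference at least as large
    as the observed one.
    """
    rng = random.Random(seed)
    n_a = len(group_a)
    n_b = len(group_b)
    observed = abs(sum(group_a) / n_a - sum(group_b) / n_b)
    pooled = list(group_a) + list(group_b)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / n_b)
        if diff >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_resamples + 1)
```

A call such as `permutation_test(hhvm_edit_counts, php5_edit_counts)`, where both arguments are hypothetical lists of per-user counts, would return a value near 1 when the groups are indistinguishable and a value near 0 when they clearly differ.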

Discussion

How is it that a dramatic improvement to performance that substantially affects the editing workflow did not either increase the number of edits saved or reduce the time spent completing the same number of edits?

- Bug

- This hypothesis must always be entertained. It could be a code bug in the treatment code or a bug in the analysis code. Given the review of treatment code and the multiple strategies used to measure effects (some parametric, others nonparametric), this seems unlikely.

- Small effect

- The effect exists and is positive, but it is so small that it is difficult to detect beyond the noise. If the effect is this small, it's probably negligible. That's a bummer.

- Lower bound on faster == better

- It could be that faster is better, but only up to a certain point. Edits take 1-7 minutes on average. Improving save time by a few seconds may not affect the pattern that much. However, I suspect that if saving were 5-10 seconds slower, we'd see a loss. There also might be steps worth considering. Recent work on the rhythms of human behavior in online systems suggests that activities tend to cluster at certain time intervals[1]. Operations exist at 3-15 seconds. Actions exist at 1-7 minutes. Activity sessions occur between 1 day and 1 week apart.

- Applying this to Wikipedia, it is common to set aside time on a regular basis to spend doing “wiki-work”. Activity Theory would conceptualize this wiki-work overall as an activity and each unit of time spent engaging in the wiki-work as an “activity session”. The actions within an activity session would manifest as individual edits to wiki pages representing contributions to encyclopedia articles, posts in discussions and messages sent to other Wikipedia editors. These edits involve a varied set of operations: typing of characters, copy-pasting the details of reference materials, scrolling through a document, reading an argument and eventually, clicking the “Save” button.

- This could imply that speeding up "operations" may have a more substantial effect than speeding up the last step in an "action". So we might expect to see more dramatic improvements by making it faster to perform sub-edit actions such as looking up references and formatting, or previewing the current edit.
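The "lower bound" argument above can be made concrete with some back-of-the-envelope arithmetic. Every number in this sketch is an illustrative assumption rather than a measurement from the experiment: a one-hour session, a 3-minute edit (inside the 1-7 minute range quoted above), and a hypothetical 2-second saving per edit.

```python
def edits_per_session(session_seconds, seconds_per_edit):
    """Edits that fit in a session if each edit takes a fixed time."""
    return session_seconds / seconds_per_edit


# All numbers below are illustrative assumptions, not measurements:
# a one-hour session, a 3-minute edit, and a 2-second saving per edit.
SESSION = 60 * 60
baseline = edits_per_session(SESSION, 180)
faster = edits_per_session(SESSION, 180 - 2)
print(f"baseline: {baseline:.2f} edits, faster: {faster:.2f} edits")
print(f"relative gain: {(faster / baseline - 1) * 100:.1f}%")
```

Under these assumptions the gain is roughly 1%, which would be hard to separate from noise in a one-week observation window.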

References

- [1] Halfaker, A., Keyes, O., Kluver, D., Thebault-Spieker, J., Nguyen, T., Shores, K., ... & Warncke-Wang, M. (2014). User Session Identification Based on Strong Regularities in Inter-activity Time. arXiv preprint. arXiv:1411.2878