Research:Evaluating list building tools for ad-hoc topic models

This project captures different efforts toward language-agnostic ad-hoc topic modeling. It addresses the following problem: for a custom topic, we want to find relevant and/or missing articles in a Wikipedia project. We want to do this in a language-agnostic way in order to support all Wikipedia projects.

Motivation

Topic classification assigns each article to one or more topics from a set of predefined topics, such as Culture, STEM, or History and Society. This is the approach started by the ORES topic model, which was subsequently refined in several iterations for Language-agnostic topic classification. This type of content grouping is useful when trying to assess, for example, which types of content attract the interest of readers and editors across languages beyond the level of individual pages (e.g. via the pageviews tool).

However, the topic-classification approach is not suitable when one is interested in topics that are not contained in the set of predefined topics, because this set is typically quite small: the currently used taxonomy contains 64 topics at its most fine-grained level.

For example, editing campaigns such as The AfroCine Project often revolve around topics that are much narrower in focus and are not covered by those 64 topics. Therefore, we would like to develop an ad-hoc topic model, which identifies articles relevant to any custom topic defined by the user. Keeping in mind the use case of campaigns, this model would ideally be language-agnostic; that is, it would work across all languages of Wikimedia projects without the need for fine-tuning or language-specific parsing.

Method

We built a tool that generates lists of articles related to a topic:

- We define a topic such as African cinema. In practice, we define the topic through a seed article (enwiki: African Cinema) or, more precisely, through the corresponding Wikidata item (Q387670).

- The models then yield a list of the k articles most related to the defined topic. In practice, the methods yield the most related Wikidata items that have an article in at least one language; they may therefore yield items that do not yet have an article in the target language. A suitable value of k depends on the use case; for manual evaluation of these lists, k=100 should suffice.

- The different models capture different aspects of similarity to the topic:

  - Readers: captures articles that are often read in conjunction with the article belonging to the topic

  - Content: captures articles that are linked in conjunction with the article belonging to the topic

  - Wikidata: captures articles that are linked in the Wikidata knowledge graph via a set of predefined properties
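The list-building step can be pictured as a nearest-neighbour query around the seed item. The sketch below assumes, purely for illustration, that a model represents each Wikidata item as a numeric vector and measures relatedness by cosine similarity; the source does not say how the readers, content, or Wikidata models compute similarity internally, and `top_k_related` and the toy vectors are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def top_k_related(seed_qid: str, embeddings: Dict[str, Sequence[float]], k: int = 100) -> List[str]:
    """Return up to k Wikidata items most similar to the seed item.

    Hypothetical: `embeddings` maps QIDs to vectors produced by one model
    (readers, content, or wikidata); this is not the tool's actual implementation.
    """
    if seed_qid not in embeddings:
        return []  # seed not covered by this model: the returned list is empty
    seed_vec = embeddings[seed_qid]
    scored = [
        (cosine(seed_vec, vec), qid)
        for qid, vec in embeddings.items()
        if qid != seed_qid  # never return the seed itself
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # most similar first
    return [qid for _, qid in scored[:k]]


# Toy vectors; item_a, item_b, item_c are invented placeholders.
toy = {
    "Q387670": [1.0, 0.0],  # seed: Cinema of Africa
    "item_a": [0.9, 0.1],
    "item_b": [0.0, 1.0],
    "item_c": [0.7, 0.7],
}
print(top_k_related("Q387670", toy, k=2))  # ['item_a', 'item_c']
```

Returning an empty list for an uncovered seed is one way to model a topic that a given approach simply does not cover.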

Example

The seed topic is Cinema of Africa (Q387670), and we query k=10 related concepts for English Wikipedia. The tool surfaces three different lists of articles. Manual inspection reveals that all articles are related to African cinema; they can be about film festivals, directors, movies, etc. associated with countries in Africa. We can already make some observations:

- The list of articles differs depending on which model we choose.

- It is not a priori clear that one list is “better”; the lists capture different aspects of the topic.

- Some articles are missing. These are articles that do not exist in a given Wikipedia (here English Wikipedia) but exist in another language version and have been deemed relevant to the topic there; thus the tool also highlights missing articles about a topic in a given language. In our case, one of the missing articles is about “Cinema of Mauritania” (Q2973194), which at the time of writing has articles only in French and Arabic Wikipedia.
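Flagging missing articles amounts to checking, for each related item, whether it has a sitelink to the target wiki. The sketch below assumes the sitelinks have already been fetched (for example from Wikidata) into a dictionary of wiki database names per item; `split_by_target_wiki` and the `item_x` entry are hypothetical, while the Q2973194 entry reflects the French and Arabic articles mentioned in the example.

```python
from typing import Dict, Iterable, List, Set, Tuple


def split_by_target_wiki(
    related_qids: Iterable[str],
    sitelinks: Dict[str, Set[str]],
    target_wiki: str = "enwiki",
) -> Tuple[List[str], List[str]]:
    """Split related items into (has article in target_wiki, missing in target_wiki).

    Hypothetical helper: `sitelinks` maps a QID to the set of wikis
    (e.g. "frwiki", "arwiki") that have an article about it.
    """
    existing, missing = [], []
    for qid in related_qids:
        if target_wiki in sitelinks.get(qid, set()):
            existing.append(qid)
        else:
            missing.append(qid)  # candidate article to create in target_wiki
    return existing, missing


sitelinks = {
    "item_x": {"enwiki", "frwiki"},    # hypothetical item with an English article
    "Q2973194": {"frwiki", "arwiki"},  # French and Arabic articles only
}
print(split_by_target_wiki(["item_x", "Q2973194"], sitelinks))
# (['item_x'], ['Q2973194'])
```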

Evaluation

We would like to quantitatively assess how well the different methods can create lists of articles related to a given topic.

Data

For evaluation of the list-building tools we use Wikipedia’s WikiProjects. WikiProjects are groups of editors who focus on specific topics, such as Music, Mathematics, or Medicine. The editors manually tag articles in a Wikipedia that they consider relevant to the respective project (an article can be tagged by several WikiProjects). For English Wikipedia there are over 2000 WikiProjects. Almost all articles on English Wikipedia have been tagged by at least one WikiProject, and many articles are relevant to multiple WikiProjects. The WikiProjects thus provide an annotated list of articles for a variety of topics. We can automatically extract all WikiProject labels for an article using the PageAssessments extension. This yields the following data:

- Name of the WikiProject

- List of articles. This can range from a few (e.g. Usability) to millions (e.g. Biography has more than 1.8M articles)

- An importance rating for each article with respect to the WikiProject (“top”, “high”, “middle”, “low”, or missing)

We have WikiProjects for three different Wikipedias: English, French, and Arabic. We apply the following filters:

- Remove all articles of a WikiProject that are missing an importance rating.

- Only keep WikiProjects with at least 100 articles and at least 1 article rated top-importance.

This leaves the following number of WikiProjects (WPs):

- English Wikipedia: 1486 WPs

- French Wikipedia: 693 WPs

- Arabic Wikipedia: 81 WPs
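The two filters can be sketched as follows. The sketch assumes the PageAssessments data has been exported as (WikiProject, article, importance) rows using the lowercase rating names listed above, with `None` for a missing rating; the row format and `filter_wikiprojects` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

RATED = {"top", "high", "middle", "low"}  # anything else counts as missing


def filter_wikiprojects(
    rows: Iterable[Tuple[str, str, Optional[str]]],
    min_articles: int = 100,
    min_top: int = 1,
) -> Dict[str, Dict[str, str]]:
    """Apply the two filters; returns {wikiproject: {article: importance}}."""
    projects: Dict[str, Dict[str, str]] = defaultdict(dict)
    for project, article, importance in rows:
        if importance not in RATED:
            continue  # filter 1: drop articles without an importance rating
        projects[project][article] = importance
    return {
        project: articles
        for project, articles in projects.items()
        # filter 2: enough rated articles and at least one top-importance article
        if len(articles) >= min_articles
        and sum(imp == "top" for imp in articles.values()) >= min_top
    }


# Toy rows (hypothetical project and article names).
rows = [("WP_A", f"a{i}", "top" if i == 0 else "low") for i in range(100)]
rows += [("WP_B", f"b{i}", "high") for i in range(150)]  # no top article
rows += [("WP_C", f"c{i}", "top") for i in range(99)]    # only 99 rated...
rows += [("WP_C", f"d{i}", None) for i in range(10)]     # ...plus 10 unrated
print(sorted(filter_wikiprojects(rows)))  # ['WP_A']
```

Applying the rating filter first means the 100-article threshold counts only rated articles, which is how the order of the two filters reads above.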

Results

We evaluate the list-building tools on the WikiProjects as follows:

- For each WikiProject, we select one of the articles rated top-importance as the seed article and define the topic by looking up the corresponding Wikidata item.

- For each list-building tool, we retrieve the 100 most related articles (sometimes a tool yields fewer than 100 articles for a given topic).

- In addition to the three language-agnostic methods described above (readers, content, and Wikidata), we also consider as a baseline the most related articles retrieved from search via morelike. Note that this is a language-specific, keyword-based method.
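The per-WikiProject scoring can be sketched as below. Several assumptions are not stated in the source: WikiProject articles have already been mapped to Wikidata QIDs so they are comparable with the retrieved items, the seed is drawn at random among top-importance articles, and the seed itself is not counted as a hit. Each method is modelled as a hypothetical function `retrieve(seed_qid, k)`.

```python
import random
from typing import Callable, Dict, List, Optional

Retriever = Callable[[str, int], List[str]]  # hypothetical: (seed_qid, k) -> QIDs


def count_hits(
    wikiprojects: Dict[str, Dict[str, str]],
    methods: Dict[str, Retriever],
    k: int = 100,
    rng: Optional[random.Random] = None,
) -> Dict[str, Dict[str, int]]:
    """For each WikiProject, count retrieved items that belong to it, per method.

    wikiprojects: {name: {qid: importance}}, already filtered so that each
    project has at least one top-importance article.
    """
    rng = rng or random.Random(0)  # fixed seed for reproducibility
    hits: Dict[str, Dict[str, int]] = {}
    for name, articles in wikiprojects.items():
        top = sorted(q for q, imp in articles.items() if imp == "top")
        seed = rng.choice(top)  # one top-importance article defines the topic
        members = set(articles) - {seed}  # do not reward returning the seed
        hits[name] = {
            method: len(set(retrieve(seed, k)[:k]) & members)
            for method, retrieve in methods.items()
        }
    return hits


# Toy data: stand-in retrievers returning fixed lists.
wps = {"WP_A": {"s": "top", "x": "low", "y": "low"}}
methods = {
    "morelike": lambda seed, k: ["x", "z"],
    "readers": lambda seed, k: ["x", "y", "s"],
}
print(count_hits(wps, methods))  # {'WP_A': {'morelike': 1, 'readers': 2}}
```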

In the following we address some of the basic questions around these lists.

How similar are the different lists?

First, we assess the size of the different lists (diagonal entries). The morelike, readers, and content methods each yield on average about 100 articles (out of 100 possible), with the readers list averaging slightly below 100. This is most likely because some topics (their seed concepts) are not covered by this approach, so the returned list is empty; since the average is only slightly below 100, this appears to be rare. In contrast, the Wikidata approach yields on average only between 20 and 40 articles.

Second, we look at how similar the different lists are by counting the number of articles that two lists have in common (off-diagonal entries). Surprisingly, on average there are no more than 10 shared articles in any pairwise comparison (recall that each list contains around 100 articles). From this we conclude that the methods yield very different sets of articles related to a topic.
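The diagonal and off-diagonal entries can be computed as averages, over topics, of list sizes and pairwise intersections. A minimal sketch, assuming the retrieved lists are stored per topic as `{method: [qid, ...]}` dictionaries (a hypothetical layout); `average_overlap` and the toy topics are illustrative.

```python
from itertools import product
from typing import Dict, List, Tuple


def average_overlap(lists_per_topic: List[Dict[str, List[str]]]) -> Dict[Tuple[str, str], float]:
    """Mean size of the intersection of lists a and b over topics, for every pair (a, b).

    Diagonal entries (a, a) are the mean list sizes; a method missing
    for a topic is treated as an empty list.
    """
    if not lists_per_topic:
        return {}
    methods = sorted({m for lists in lists_per_topic for m in lists})
    totals = {pair: 0 for pair in product(methods, repeat=2)}
    for lists in lists_per_topic:
        for a, b in list(totals):
            totals[(a, b)] += len(set(lists.get(a, [])) & set(lists.get(b, [])))
    return {pair: total / len(lists_per_topic) for pair, total in totals.items()}


topics = [
    {"morelike": ["a", "b", "c"], "readers": ["b", "c", "d"]},
    {"morelike": ["x"], "readers": []},  # e.g. a seed not covered by readers
]
overlap = average_overlap(topics)
print(overlap[("morelike", "readers")])  # 1.0
```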

How well do the different approaches work?

How difficult is it to find articles that are relevant to a given WikiProject, and how well do the different list-building methods perform at this task? We compare the methods by checking how many of the articles retrieved for a given topic are actually contained in the corresponding WikiProject.

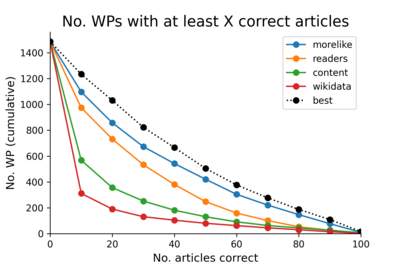

For a given topic defined by a WikiProject, we count how many of the articles retrieved by each method are actually contained in the WikiProject; the better a method works, the closer this number is to 100, and the worse it works, the closer it is to 0. Aggregating over all WikiProjects, we show the number of WikiProjects for which a given list-building method generates at least X articles that are contained in the WikiProject. We see that, in many cases, morelike yields a large number of articles that are in the WP (e.g. for almost 900 WPs it finds at least 20 relevant articles). The readers method performs almost at the same level (e.g. for almost 800 WPs it finds at least 20 relevant articles). The content and Wikidata methods yield a much lower number of relevant articles. In general, identifying a large number of relevant articles is not trivial: only for a small number of WPs can we find more than 80 relevant articles.
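The aggregated curve is a complementary cumulative count: for each threshold X, the number of WPs whose hit count is at least X. A minimal sketch, assuming the per-WP hit counts for one method are already available; the function name, thresholds, and toy counts are illustrative, not results from the study.

```python
from typing import Dict, Iterable, List


def wps_with_at_least(hits: List[int], thresholds: Iterable[int] = range(0, 101, 10)) -> Dict[int, int]:
    """Map each threshold X to the number of WPs with at least X relevant articles."""
    return {x: sum(h >= x for h in hits) for x in thresholds}


hits_one_method = [0, 20, 25, 80, 95]  # toy per-WP counts, not real results
print(wps_with_at_least(hits_one_method, thresholds=[0, 20, 80, 100]))
# {0: 5, 20: 4, 80: 2, 100: 0}
```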

One way to improve the overall performance is to combine the different methods, since they generate lists that differ in which articles they contain. Picking the best-performing method for each WP substantially increases the number of relevant articles found per WP (the dashed line is shifted upwards considerably).
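Picking the best-performing method per WP is a per-WP maximum over the hit counts. A minimal sketch with hypothetical names and toy numbers; note that this selection uses the WikiProject labels themselves, so it reflects what is achievable if the best method for each WP were known in advance.

```python
from typing import Dict, Tuple


def best_method_per_wp(hits: Dict[str, Dict[str, int]]) -> Dict[str, Tuple[str, int]]:
    """For each WP, return (best method, its hit count); ties go to the first method listed."""
    best = {}
    for wp, counts in hits.items():
        method = max(counts, key=counts.get)  # max() keeps the first maximal key
        best[wp] = (method, counts[method])
    return best


toy_hits = {
    "WP_A": {"morelike": 30, "readers": 45, "content": 10, "wikidata": 5},
    "WP_B": {"morelike": 60, "readers": 60, "content": 2, "wikidata": 0},
}
print(best_method_per_wp(toy_hits))
# {'WP_A': ('readers', 45), 'WP_B': ('morelike', 60)}
```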

This shows that picking from a variety of list-building methods can increase the set of relevant articles we are able to identify.

How much improvement between the different methods

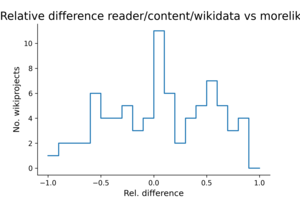

Here, we want to assess how much improvement we get from choosing one method over another; specifically, when choosing the language-specific morelike or one of the language-agnostic methods (readers/content/wikidata).

For this, we calculate for each WP the relative difference X between the two approaches (morelike and language-agnostic). If N1 is the number of relevant articles for morelike and N2 is the number of relevant articles for the best-performing language-agnostic method, the relative difference is X = (N2 - N1)/max(N1, N2). Thus, X lies between -1 and 1, and we can assess whether morelike is better (X<0), language-agnostic is better (X>0), or the two approaches are similar (X=0). In addition, the magnitude of X indicates how much the two approaches differ. For example, X=0.5 means that morelike yields only half as many relevant articles as the language-agnostic method (the latter thus providing a 100% improvement).
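The relative difference follows directly from its definition. A minimal sketch; the function names are hypothetical, and returning 0 when both counts are zero is an assumption, since the definition leaves that case undefined.

```python
from typing import Dict

AGNOSTIC = ("readers", "content", "wikidata")


def relative_difference(n1: int, n2: int) -> float:
    """X = (N2 - N1) / max(N1, N2); N1 = morelike hits, N2 = best language-agnostic hits."""
    denom = max(n1, n2)
    return (n2 - n1) / denom if denom else 0.0  # assumption: X = 0 when N1 = N2 = 0


def x_per_wp(hits: Dict[str, Dict[str, int]]) -> Dict[str, float]:
    """Compute X for every WP from per-method hit counts (missing methods count as 0)."""
    return {
        wp: relative_difference(c["morelike"], max(c.get(m, 0) for m in AGNOSTIC))
        for wp, c in hits.items()
    }


print(relative_difference(20, 40))  # 0.5: morelike finds half as many (100% improvement)
print(relative_difference(40, 20))  # -0.5: morelike is better
```

For N2 > 0, X ≥ 0.5 holds exactly when N1 ≤ N2/2, which is why the region at and above X=0.5 corresponds to an improvement of 100% or more.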

We see there is a large peak at X=0 (and slightly negative values), suggesting that for many WPs the two different approaches yield a similar number of relevant articles.

However, there is another large peak at X=0.5 and a substantial mass towards X=1.0. This part of the histogram translates into several hundred WPs for which the language-agnostic approach provides a 100% (or greater) improvement over the language-specific morelike approach.

Results beyond English

French Wikipedia

In French Wikipedia, the lists generated by morelike capture more articles from the WikiProjects than any of the other methods. The lists from the alternative methods do not provide much additional gain.

Arabic Wikipedia

In Arabic Wikipedia, the lists generated from reader interests capture more articles from the WikiProjects than morelike does (or a similar number). The alternative methods yield a substantial gain compared to morelike.

This suggests that for smaller Wikipedias, such as arwiki, the lists generated from reader interest work exceptionally well in capturing articles related to specific topics.