Research:Identification of Unsourced Statements/Labeling Pilot/Results

We report the results of the Wikilabels campaign after around one month of activity. The campaign started on August 17th, 2018. We advertised the campaign across multiple channels: social media, the village pump on enwiki, the "Bar" on itwiki, and "le Bistro" on frwiki, as well as various mailing lists, both language-specific (wikiit-l) and topic-specific (e.g. wikicite-discuss). We collected a total of 1174 labels from more than 60 participants across 3 languages. Due to an error in our system, we could collect full data for only 668 labels. We would like to thank all the editors who contributed to this experiment!

Analysis of the Contributions

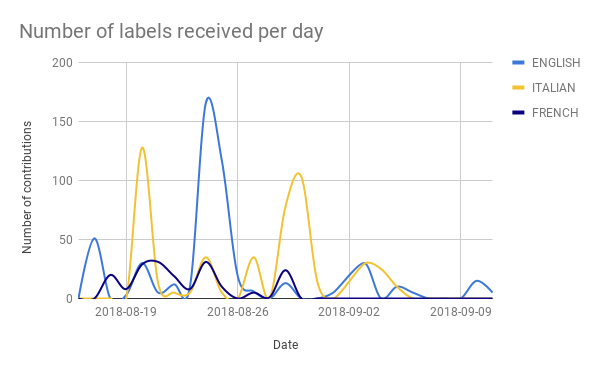

Below is the distribution of labels received each day for the three languages. There was a very prompt response to our announcements on Aug 17th and 20th! Other peaks correspond to campaign announcement follow-ups distributed over time.

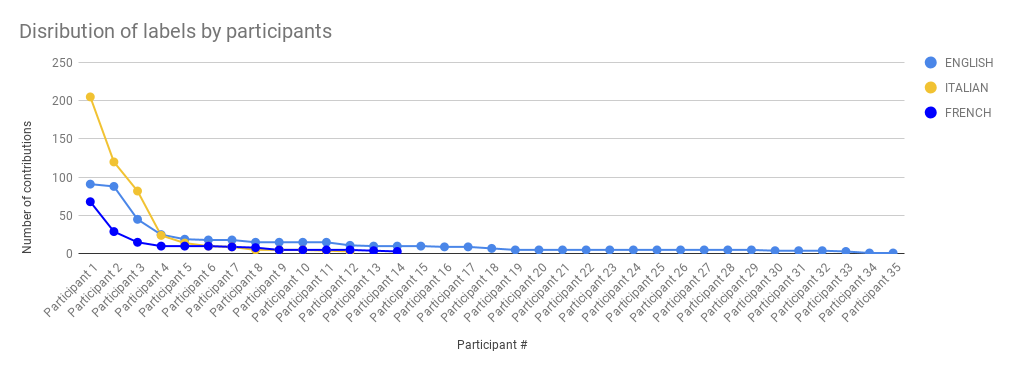

We also looked at the distribution of participants per language. Overall, editors from English Wikipedia contributed the most labels: 35 editors contributed 502 labels, followed by Wikipedia in Italiano, with 12 editors and 486 labels, and Wikipédia en français, with 14 editors and 186 labels.
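The per-language figures above can be cross-checked against the campaign totals with a few lines of arithmetic. This is a minimal sketch: the counts are those reported in the paragraph above, while the dictionary layout and variable names are our own illustrative choices, not part of the campaign tooling.

```python
# Per-language counts as reported in the paragraph above.
# The data structure and names are illustrative assumptions.
contributions = {
    "English": {"editors": 35, "labels": 502},
    "Italian": {"editors": 12, "labels": 486},
    "French": {"editors": 14, "labels": 186},
}

# Totals across the three languages.
total_editors = sum(c["editors"] for c in contributions.values())
total_labels = sum(c["labels"] for c in contributions.values())

# Average number of labels contributed by each editor, per language.
labels_per_editor = {
    lang: c["labels"] / c["editors"] for lang, c in contributions.items()
}

print(f"editors: {total_editors}, labels: {total_labels}")
for lang, avg in labels_per_editor.items():
    print(f"{lang}: {avg:.1f} labels per editor")
```

The totals come to 61 editors and 1174 labels, consistent with the figures in the introduction. The averages also suggest that Italian editors labeled many more sentences each (about 40) than English or French editors (about 14 and 13, respectively).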

Analysis of the Results

We break down the analysis of the results into two main questions:

- Given sentences from a Featured Article, do individual editors recognize sentences with citations?

- What are the most prominent reasons why editors would add citations to sentences?

Recognizing sentences in need of a citation

In our experiment, we took sentences from featured articles, i.e. sentences whose verifiability has been evaluated multiple times by the respective communities. We then removed the citation marks and let the editors guess whether each sentence would need a citation or not. Results show that editors tend to be conservative and mark most sentences as [citation needed]. Around 90% of the time or more (89.5% to 95.6%, depending on the language), editors correctly recognized sentences in need of a citation. However, about 55% of the time, editors of English Wikipedia incorrectly labeled as "positives" sentences not needing a citation. This percentage grows for non-English Wikipedias. In the tables below, "FALSE" denotes sentences not needing a citation and "TRUE" sentences needing one; "real" indicates whether the sentence has a citation in the featured article, and "labeled" is the label assigned by Wikilabels contributors. Each row sums to 100%. Results are based on the full set of 1174 labels collected.

ENGLISH

| real \ labeled | FALSE | TRUE |
| --- | --- | --- |
| FALSE | 44.5% | 55.5% |
| TRUE | 4.4% | 95.6% |

FRENCH

| real \ labeled | FALSE | TRUE |
| --- | --- | --- |
| FALSE | 24.7% | 75.3% |
| TRUE | 7.9% | 92.1% |

ITALIAN

| real \ labeled | FALSE | TRUE |
| --- | --- | --- |
| FALSE | 11.1% | 88.9% |
| TRUE | 10.5% | 89.5% |
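The percentages in the tables above are normalized per row, so each "real" class sums to 100%. The following is a minimal sketch of how such a table could be computed from pairs of (real, labeled) values. The function name and the toy data are hypothetical and are not the campaign's actual labels or analysis code.

```python
from collections import Counter


def row_normalized_confusion(pairs):
    """Return {real: {labeled: percentage}} for (real, labeled) boolean pairs.

    Each row (one value of `real`) is normalized to sum to 100%.
    A row with no samples is reported as all zeros.
    """
    counts = Counter(pairs)
    table = {}
    for real in (False, True):
        row_total = sum(counts[(real, labeled)] for labeled in (False, True))
        table[real] = {
            labeled: (100.0 * counts[(real, labeled)] / row_total
                      if row_total else 0.0)
            for labeled in (False, True)
        }
    return table


# Hypothetical toy data (NOT the campaign's labels):
# 10 sentences without a citation, 20 sentences with one.
toy_pairs = (
    [(False, False)] * 4 + [(False, True)] * 6
    + [(True, False)] * 1 + [(True, True)] * 19
)

table = row_normalized_confusion(toy_pairs)
for real in (False, True):
    row = table[real]
    print(f"real={real}: labeled FALSE {row[False]:.1f}%, "
          f"labeled TRUE {row[True]:.1f}%")
```

Read this way, the "real TRUE / labeled TRUE" cell is the share of cited sentences that editors correctly flagged, and the "real FALSE / labeled TRUE" cell is the share of uncited sentences that editors flagged anyway.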

Labeling reasons why sentences need a citation

We analyze here the responses to the second question of the task. Due to an initial error, we collected only 668 responses for this second part of the task. In general, our taxonomy covered the reasons well: only a very small percentage of participants selected "other" as the reason why a sentence needs a citation. Moreover, the option "common knowledge" did not appear in the dropdown menu among the reasons for not adding a citation, although we had it in our initial taxonomy (another bug!). However, most of the users who selected the "other" option specified in the comment field that their choice of "other" meant "common knowledge".

In general, we find that half of the sentences are labeled as needing a citation because they are "historical facts", "direct quotations", or "scientific facts". As a reminder, these are sentences randomly sampled from all featured articles in different languages. This might tell us that a large part of verifiable encyclopedic knowledge is related to these categories. In English and French Wikipedia, if a sentence does not need a citation, it is likely because it lies in the lead section. However, due to the small number of "negative" samples, i.e. sentences not needing a citation, we might not want to generalize these findings.