Incident Reporting System/Updates

We have renamed the Private Incident Reporting System to the Incident Reporting System. The word "Private" has been removed from the name. In the context of harassment and the UCoC, the word "Private" refers to respecting community members' privacy and ensuring their safety. It does not mean that all phases of reporting will be confidential. We received feedback that using this word in the name is confusing and can be difficult to translate, so we removed it.

Updates

Test the Incident Reporting System Minimum Testable Product in Beta – November 10, 2023

Editors are invited to test an initial Minimum Testable Product (MTP) for the Incident Reporting System.

The Trust & Safety Product team has created a basic product version enabling a user to file a report from the talk page where an incident occurs.

Note: This product version is for learning about filing reports to a private email address (e.g., emergency@wikimedia.org or an Admin group). This doesn't cover all scenarios, like reporting to a public noticeboard.

Your feedback is needed to determine if this starting approach is effective.

To test:

1. Log in and visit any talk namespace page on Wikipedia in Beta that contains discussions. Sample talk pages are available at User talk:Testing and Talk:African Wild Dog.

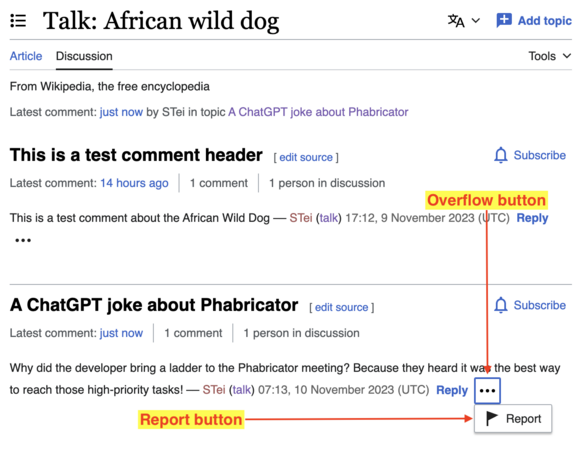

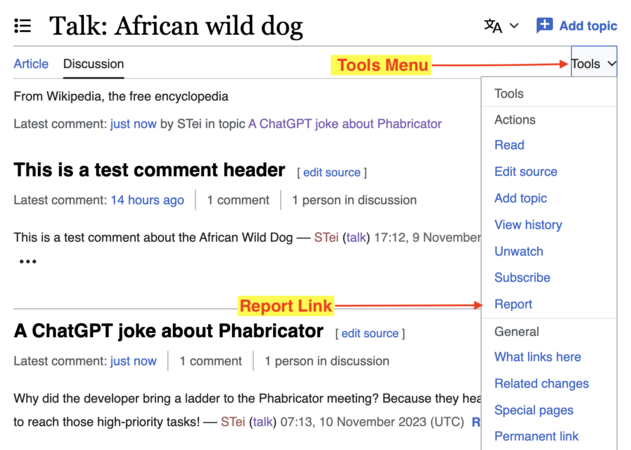

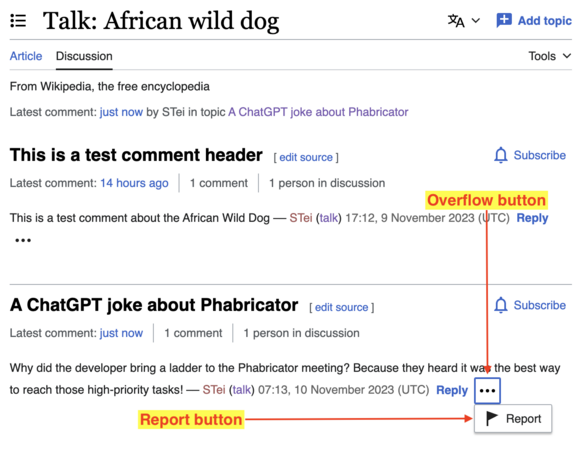

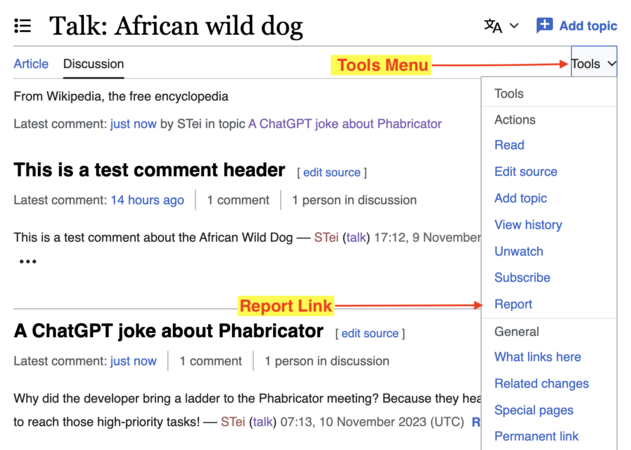

2. Next, click on the overflow button (vertical ellipsis) near the Reply link of any comment to open the overflow menu and click Report (see slide 1). You can also use the Report link in the Tools menu (see slide 2).

- Slide 1

- Slide 2

3. File a report: fill in the form and submit it. An email will be sent to the Trust and Safety Product team, who will be the only ones to see your report. Please note that this is a test, so do not use it to report real incidents.

4. As you test, consider these questions:

- What do you think about this reporting process? In particular, what do you like or dislike about it?

- If you are familiar with extensions, how would you feel about having this on your wiki as an extension?

- Which issues have we missed at this initial reporting stage?

5. Following your test, please leave your feedback on the talk page.

Troubleshooting

If you can't find the overflow menu or Report links, or the form fails to submit, please ensure that:

If DiscussionTools doesn't load, a report can be filed from the Tools menu. If you can't file a second report, please note that there is a limit of 1 report per day for non-confirmed users and 5 reports per day for autoconfirmed users. These requirements help to reduce the possibility of malicious users abusing the system.
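The daily limits mentioned above can be illustrated with a minimal sketch. This is not the ReportIncident extension's code: the class name `DailyReportLimiter`, the group labels, and the choice to count per calendar day (rather than per rolling 24 hours) are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import date

# Daily limits quoted in the troubleshooting note; the group labels are assumed.
DAILY_LIMITS = {"non-confirmed": 1, "autoconfirmed": 5}


class DailyReportLimiter:
    """Hypothetical helper that counts reports per user per calendar day."""

    def __init__(self, limits=DAILY_LIMITS):
        self.limits = limits
        self._counts = defaultdict(int)  # (user, day) -> reports filed so far

    def try_file(self, user: str, group: str, day: date) -> bool:
        """Record a report and return True, or return False if the limit is reached."""
        key = (user, day)
        if self._counts[key] >= self.limits[group]:
            return False
        self._counts[key] += 1
        return True
```

Under these assumptions, a non-confirmed tester's second report on the same day would be refused, which matches the symptom described in the troubleshooting note.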

Update: Sharing incident reporting research findings – September 20, 2023

The Incident Reporting System project has completed research about harassment on selected pilot wikis.

The research, which started in early 2023, studied the Indonesian and Korean Wikipedias to understand harassment, how harassment is reported and how responders to reports go about their work.

The findings of the studies have been published.

Four updates on the Incident Reporting project – July 27, 2023

Hello everyone! For the past couple of months the Trust and Safety Product team has been working on finalising Phase 1 of the Incident Reporting System project.

The purpose of this phase was to define possible product direction and scope of the project with your feedback. We now have a better understanding of what to do next.

1. We are renaming the project as Incident Reporting System

The project is now known as the Incident Reporting System, with the word "Private" removed.

In the context of harassment and the UCoC the word “Private” refers to respecting community members’ privacy and ensuring their safety. It does not mean that all phases of reporting will be confidential.

We have received feedback that this term is confusing and can be difficult to translate into other languages. Therefore we are removing it.

2. We have some feedback from researching some pilot communities

We are conducting research on harassment in the Indonesian and Korean Wikipedia communities. With their feedback, we have been able to document how users in these communities report harassment and created maps out of the information. These maps represent, to the best of our knowledge, how community members on both wikis currently report incidents of harassment and abuse.

- How Korean Wikipedia reports harassment

- How Indonesian Wikipedia reports harassment

If you have any feedback on these maps, you can give it on the talk page.

3. We have updated the project’s overview

What we want to build moving forward

- The Trust & Safety Tools team will be developing an extension for reporting incidents/UCoC violations.

- The extension is intended to be configurable: communities should be able to adapt it to their local processes.

- The extension name is ReportIncident.

- The purpose of the extension is to:

  - Facilitate the filing of reports about various types of UCoC violations by Wikimedians.

  - Route those reports to the appropriate entities that will need to process them.

  - Facilitate the filing of reliable reports and filter out/redirect the unactionable ones.

  - Facilitate the filing of both private (e.g. to an email address) and public (e.g. on-wiki to an Admin noticeboard) reports according to local processes.

- The extension is intended to be incident agnostic (able to support the reporting of different types of incidents).
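To make the idea of configurable routing more concrete, here is a rough sketch of how a wiki might map incident types to private or public destinations. Everything in it is hypothetical: the `Report` fields, the `ROUTES` table, the `example.org` address, and the noticeboard title are placeholders, not the extension's actual configuration.

```python
from dataclasses import dataclass


@dataclass
class Report:
    reporter: str
    incident_type: str
    details: str


# Hypothetical per-wiki configuration: each incident type maps to a
# (visibility, destination) pair chosen by the local community.
ROUTES = {
    "harassment": ("private", "reports@example.org"),
    "content": ("public", "Project:Administrators' noticeboard"),
}
# Assumed fallback for incident types the wiki has not configured.
DEFAULT_ROUTE = ("private", "reports@example.org")


def route(report: Report, routes=ROUTES, default=DEFAULT_ROUTE):
    """Return the (visibility, destination) pair for a report."""
    return routes.get(report.incident_type, default)
```

Keeping the routing table as data rather than logic is one way a community could adapt the tool to its local processes without changing code.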

What we won’t be doing

- The system is intended for reporting and routing only; we will not be dealing with processing reports.

- The system is intended for incidents regarding UCoC violations. We will not use it for other types of requests (such as technical support requests, account access, etc.).

- The system is NOT meant to replace existing processes on wikis. Our purpose is to make it easier to follow existing processes.

4. We have the first iteration of the reporting extension ReportIncident

This is just an initial iteration with minimal features to get us started. It is not a finalized product.

In November last year we talked about how we should start small with a very limited scope, so for our first iteration we thought about creating a very basic experience.

What’s included in this initial iteration?

- Ability to report from User Talk page

  - Report a topic header

  - Report a comment

- Ability to complete a basic form and submit

  - The report will be sent to an email address (a dummy email for testing purposes).

Designs

The first version of the MTP (minimum testable product) will let a Wikimedian report an abusive topic header or comment on a talk page. Here are the designs.

- On Mobile

- On Desktop

Implementing Designs – What’s next

The Trust and Safety Product team is now working on developing these initial designs as an MTP, a proof of concept that will be deployed to Beta-cluster and tested internally. The purpose of this is to assess technical viability. If everything goes well the next step is to deploy to test.wikimedia.org for usability testing and feedback.

Looking forward to your feedback about this first iteration on the talk page!

November 8, 2022

Our main goal for the past couple of months was to understand the problem space and understand what people are struggling with, what they need, and their expectations around this project. We did this by:

- Reviewing and synthesizing harassment research, surveys and other relevant documentation (going back to 2013)

- Having user interviews with volunteers who have experienced or witnessed harassment on Wikipedia

- Having discussions with Staff members, UCoC drafting committee and wiki functionaries.

Our purpose was to identify priorities, scope and a possible product direction.

Findings and next steps

Focus on Safety

The recommendation from the Movement Strategy discussions is to provide for safety and inclusion within the communities. As our ultimate goal is for people to feel safe when participating in Wikimedia projects, we will use this as the guiding principle for what to focus on in the minimum viable product (MVP).

Project Approach: start small

There are a lot of things to take into consideration when thinking about this project.

- Many types of Users: reporter, responder, observer, accused, monitor

- Many Use cases: doxing, abuse of power, content violations, security breaches, legal issues etc.

- A lot of Complexities: admins as harassers, off wiki harassment, government interference etc.

This project will grow and become more complex over time. So we need to start really small, with a very limited scope before we dive into anything more complex.

Focus on two types of users

We have identified a few different types of users:

- Reporters: Users who have experienced harassment, and are filing a report.

- Responders: Users who receive the report, and want to help.

- Accused: The users who are named in the report.

- Monitor: People who are interested in tracking the progress of reports, to understand the problem better or to ensure that people are treated properly.

Since we want to start small, we will focus on reporters and responders first.

MVP Approach (Short-term)

The way we would like to approach this is to build something small that will help us figure out whether the basic experience actually works.

Principles of the MVP:

- We will design for, test on, and release to a few pilot wikis.

- Since our goal is to address safety we are going to focus only on 3.1 (Harassment) in the UCoC.

- We will explore a basic experience for two user groups only:

  - Reporters will understand how to file a report, and feel comfortable enough to complete the report process.

  - Responders will receive clear reports, giving them the information that they need in order to understand the problem.

- MVP will connect to current systems as they are (we are not changing any existing processes)

This experiment should also help us explore and answer some important questions and learn things as we go:

- Entry points (where reporting starts) – what are they, should we have one or more?

- Users – do people easily discover the entry point? What do they think will happen when they engage it?

- Scale – can we do this at scale? Will we overwhelm the responders? etc.

- Data – can we build something that will help us collect the data we need in order to make decisions? What can we measure to know we’re moving in the right direction?

What we are not doing (yet)

The idea is to start with a really small scope, try a few things and learn as we go. Therefore we need to be very clear about what we are not going to do yet:

- We are not solving for bad admins and/or other complex use cases

- We are not fixing existing flawed processes

- Not everything in the UCoC is about safety but we are focusing only on safety

- Agnostic reporting – we cannot do this without validating a basic reporting experience works with a specific type of incident

What happens after the MVP (long-term)

We have some ideas about v2 and v3 but we want to experiment with an MVP first and see how people feel about it. What we learn now will be useful to make decisions about future versions.

Some v2 and v3 ideas include:

- Private reporting (creating a private space for reporters and responders to interact)

- Escalation (having the ability to route cases to a different entity for further support)

In order to explore these two ideas we need to ensure the basic/core experience actually works. If it does we will build on top of it.

Discussion points

- What do you think about this approach?

- What scares/concerns you about this project?

Looking forward to your feedback on the talk page!

Update – September 30, 2022

We have been collecting feedback, reading through existing documentation and conducting interviews in order to better understand the problem space and identify critical questions we need to answer. We are currently synthesising the information we have collected in an effort to start defining a clearer scope for the project. It is a lot of information to go through, so this might take a while. There are so many things we need to learn!