Research:Improving link coverage

We have turned this research into a tool [dead link] for adding missing links to Wikipedia. Check it out!

This page summarizes the research. For a more detailed account, see the peer-reviewed paper, "Improving Website Hyperlink Structure Using Server Logs".

Introduction

Good websites should be easy to navigate via hyperlinks, yet maintaining a link structure of high quality is difficult. Identifying pairs of pages that should be linked may be hard for human editors, especially if the site is large and changes are frequent. Further, given a set of useful link candidates, the task of incorporating them into the site can be expensive, since it typically involves humans editing pages. In the light of these challenges, it is desirable to develop data-driven methods for partly automating the link placement task.

Here we develop an approach for automatically finding useful hyperlinks to add to a website. We show that passively collected server logs, beyond telling us which existing links are useful, also contain implicit signals indicating which nonexistent links would be useful if they were to be introduced. We leverage these signals to model the future usefulness of as yet nonexistent links. Based on our model, we define the problem of link placement under budget constraints and propose an efficient algorithm for solving it. We demonstrate the effectiveness of our approach by evaluating it on Wikipedia.

Motivation

- Wikipedia links are important for presenting concepts in their appropriate context, for letting users find the information they are looking for, and for providing an element of serendipity by giving users the chance to explore new topics they happen to come across without intentionally searching for them.

- Wikipedia changes a lot (e.g., about 7,000 new articles are created every day [1]), and keeping the link structure up to date requires more work than there are editors to do it.

- We therefore want automatic methods that suggest useful new links, so that editors merely have to accept or reject suggestions rather than find the links from scratch. (A human in the loop is still useful to ensure that the added links are indeed of high quality.)

- Automated methods can make an arbitrary number of suggestions, which may overwhelm the editors in charge of approving the suggestions. Therefore a link suggestion algorithm should carefully select a link set of limited size.

Contributions

- We propose methods for predicting the probability that a link will be clicked by users visiting its source page (the link's so-called clickthrough rate) before the link is added to Wikipedia. Based on the predicted clickthrough rates, all links that don't exist yet in Wikipedia (the so-called link candidates) can be ranked by usefulness.

- To solve the task of selecting a link set of limited size (in order not to overwhelm the human editors in charge of approving the algorithm's suggestions; cf. above), we define the problem of link placement under budget constraints, propose objective functions for formalizing the problem, and design an optimal algorithm for solving it efficiently.

Methodology: Clickthrough-rate prediction

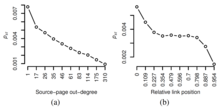

- Wikipedia links added by human editors are used very rarely on average (Fig. 1).

- That is, simply mimicking human editors would typically not result in useful link suggestions.

- Instead, we propose a data-driven approach and use the following proxies for predicting the usefulness of a new link from a source page $s$ to a target page $t$:

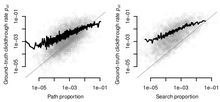

- Path proportion: If users who visit a source page $s$ often navigate to a target page $t$ on indirect paths, this indicates a need to visit $t$ that could be more easily satisfied by a direct link from $s$ to $t$. Hence, we use the fraction of visitors of $s$ who continue to navigate to $t$ (the so-called path proportion) as a predictor of the clickthrough rate of the link $(s, t)$.

- Search proportion: Similarly, if many users use the Wikipedia search box to search for a target page $t$ while visiting a source page $s$, this indicates a need to learn about $t$ that could be more easily met by a direct link from $s$ to $t$. Therefore, we use the fraction of visitors of $s$ who continue to perform a keyword search for $t$ (the so-called search proportion) as a predictor of the clickthrough rate of the link $(s, t)$.
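The two proxies above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the trace format (a time-ordered list of `("page", title)` and `("search", query)` events per user session) and the function name `candidate_proportions` are assumptions made for this example, and it omits the step of filtering out targets that the source page already links to.

```python
from collections import Counter


def candidate_proportions(traces, source):
    """Estimate path and search proportions for all targets reached from `source`.

    `traces` is a hypothetical format: each trace is a time-ordered list of
    ("page", title) or ("search", query) tuples for one user session.
    Returns two dicts mapping target -> fraction of visitors of `source`.
    """
    visitors = 0
    path_hits = Counter()
    search_hits = Counter()
    for trace in traces:
        if ("page", source) not in trace:
            continue
        visitors += 1
        # Only events after the first visit to the source count.
        later = trace[trace.index(("page", source)) + 1:]
        # Count each target at most once per trace.
        path_hits.update(
            {name for kind, name in later if kind == "page" and name != source}
        )
        search_hits.update({name for kind, name in later if kind == "search"})
    if visitors == 0:
        return {}, {}
    path = {t: c / visitors for t, c in path_hits.items()}
    search = {t: c / visitors for t, c in search_hits.items()}
    return path, search


# Toy data: three sessions visit "A"; two of them later reach "C".
traces = [
    [("page", "A"), ("page", "B"), ("page", "C")],
    [("page", "A"), ("search", "C"), ("page", "C")],
    [("page", "A"), ("page", "D")],
    [("page", "B"), ("page", "C")],
]
path, search = candidate_proportions(traces, "A")
```

In this toy data, two of the three visitors of "A" later reach "C" and one of them searches for "C", so "C" would rank highest among the candidate targets for "A".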

Methodology: Link placement under budget constraints

- We find empirically that adding more links out of the same source page $s$ does not increase the number of links clicked by users visiting $s$ by much.

- It is therefore desirable not to place too many links into the same source page $s$; instead, we should spread the links suggested for addition across many source pages.

- We formalize this desideratum in several objective functions and provide a simple greedy algorithm for finding the optimal set of links, given a user-specified 'link budget' $K$.
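As a rough illustration of greedy selection with diminishing returns per source page, consider the sketch below. The objective is an assumption chosen for this example and is not necessarily one of the objective functions from the paper: each visitor of a source page clicks at least one of the newly placed links with probability $1 - \prod (1 - p)$ over the predicted clickthrough rates $p$ of the links placed on that page. The function name and input format are likewise hypothetical.

```python
import heapq


def greedy_link_placement(visitors, ctr, budget):
    """Pick up to `budget` links by repeatedly taking the largest marginal gain.

    visitors: dict source -> number of visits (hypothetical input format)
    ctr:      dict (source, target) -> predicted clickthrough rate
    Assumed objective: sum over sources of
        visitors[s] * (1 - prod over chosen targets of (1 - ctr[s, t])).
    """
    # Group candidates by source, best predicted CTR first.
    by_source = {}
    for (s, t), p in ctr.items():
        by_source.setdefault(s, []).append((p, t))
    for cands in by_source.values():
        cands.sort(reverse=True)

    no_click = {s: 1.0 for s in by_source}  # P(no chosen link at s is clicked)
    next_idx = {s: 0 for s in by_source}    # next unused candidate per source

    # Max-heap (via negated gains) holding the best remaining link per source.
    heap = [(-visitors[s] * cands[0][0], s) for s, cands in by_source.items()]
    heapq.heapify(heap)

    chosen = []
    while heap and len(chosen) < budget:
        _, s = heapq.heappop(heap)
        p, t = by_source[s][next_idx[s]]
        chosen.append((s, t))
        no_click[s] *= 1.0 - p
        next_idx[s] += 1
        if next_idx[s] < len(by_source[s]):
            p_next = by_source[s][next_idx[s]][0]
            # The marginal gain shrinks as more links are placed on s.
            heapq.heappush(heap, (-visitors[s] * no_click[s] * p_next, s))
    return chosen


visitors = {"A": 1000, "B": 500}
ctr = {("A", "x"): 0.10, ("A", "y"): 0.08, ("B", "z"): 0.15}
picked = greedy_link_placement(visitors, ctr, budget=2)
```

With a budget of 2, the sketch picks ("A", "x") and then ("B", "z"). Ranking by visitors times clickthrough rate alone would have placed ("A", "y") second (80 versus 75 expected clicks), but after discounting for the link already placed on "A" its marginal gain drops to about 72, so the second link goes to a different source page.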

Data

- We use Wikimedia's server logs, where all HTTP requests to Wikimedia projects are logged.

- We currently use only requests to the desktop version of the English Wikipedia, but our method generalizes to any language edition.

- From the raw logs, we extract entire navigation traces by stringing together the URL of the requested page with the referer URL (more info on how we do it here).
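One plausible way to string requests together is sketched below. It is a simplified heuristic for illustration, not the procedure referred to above: it assumes the requests of a single client are already grouped and time-ordered as hypothetical `(url, referer)` pairs, and it attaches each request to the most recent earlier request whose URL equals its referer.

```python
def build_parents(requests):
    """Link each request to the most recent earlier request matching its referer.

    `requests` is a hypothetical input: a time-ordered list of (url, referer)
    pairs for one client, where referer is None if the header was absent.
    Returns a list where entry i is the index of request i's parent, or None.
    """
    parents = []
    last_seen = {}  # url -> index of its most recent request
    for i, (url, referer) in enumerate(requests):
        parents.append(last_seen.get(referer))
        last_seen[url] = i
    return parents


def root_to_leaf_paths(requests, parents):
    """Turn the parent pointers into navigation traces (one per leaf)."""
    has_child = {p for p in parents if p is not None}
    paths = []
    for i in range(len(requests)):
        if i in has_child:
            continue
        path = []
        j = i
        while j is not None:  # walk up to the root
            path.append(requests[j][0])
            j = parents[j]
        paths.append(path[::-1])
    return paths


requests = [
    ("/wiki/A", None),
    ("/wiki/B", "/wiki/A"),
    ("/wiki/C", "/wiki/B"),
    ("/wiki/D", "/wiki/A"),  # user went back to A (or used a tab) and clicked D
    ("/wiki/E", None),       # a new trace with no referer
]
parents = build_parents(requests)
paths = root_to_leaf_paths(requests, parents)
```

Because a user can open several links from the same page, the result is naturally a tree per entry point; the sketch flattens each tree into its root-to-leaf paths.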

Note: We discussed the possibility of releasing the dataset used in this research here.

Evaluation

- Path proportion and search proportion (see above) are good predictors of clickthrough rate (Fig. 2).

- Links with high predicted clickthrough rates are very likely to be added by human editors after prediction time, independently of our predictions (Fig. 3).

- Top 10 suggestions of our budget-constrained link placement algorithm: cf. Fig. 4.

Research Terms

This formal research collaboration is based on a mutual agreement between the collaborators to respect Wikimedia user privacy and focus on research that can benefit the community of Wikimedia researchers, volunteers, and the WMF. To this end, the researchers who work with the private data have entered into a non-disclosure agreement as well as a memorandum of understanding.

References

- [1] Ashwin Paranjape, Robert West, Leila Zia, and Jure Leskovec (2016): Improving Website Hyperlink Structure Using Server Logs. Proceedings of the 9th International ACM Conference on Web Search and Data Mining (WSDM), San Francisco, Calif., doi:10.1145/2835776.2835832.