Talk:Community health initiative/Blocking tools and improvements

What this discussion is about

The Wikimedia Foundation's Anti-Harassment Tools team is identifying shortcomings in MediaWiki’s current blocking functionality in order to determine which blocking tools we can build for wiki communities to minimize disruption, keep bad actors off their wikis, and mediate situations where entire site blocks are not appropriate.

This discussion will help us prioritize which new blocking tools or improvements to existing tools our software developers will build in early 2018.

In early February we will want to narrow the list of suggestions to a few of the most promising ideas to pursue. We will ask for more feedback on a short list of ideas our software development team and Legal department think are the most plausible.

Thank you! — Trevor Bolliger, WMF Product Manager 🗨 00:45, 20 January 2018 (UTC)

Problem 1. Username or IP address blocks are easy to evade by sophisticated users

Previous discussions

- The EFF's Panopticlick has shown that it is possible to identify a single user in many cases through some surveillance techniques. Maybe it is something worth investigating? Comte0 (talk) 21:16, 8 December 2017 (UTC)

- This is definitely something worth investigating, thanks for sharing! I'm not sure we'll be able to capture all of this data given our Privacy Policy, but we should definitely look into it. — Trevor Bolliger, WMF Product Manager 🗨 22:37, 8 December 2017 (UTC)

- This makes sense, so I'd suggest that administrators could have check-user permissions to quickly check the user's data and understand whether the blocked user is trying to evade the block. --Goo3 (talk) 08:15, 14 December 2017 (UTC)

- Yes, anything that is as sensitive as IP information will need to be permissioned to CheckUser levels. We'll work closely with the WMF's Legal department to make sure we're not violating our privacy policy or terms of use. — Trevor Bolliger, WMF Product Manager 🗨 22:30, 15 December 2017 (UTC)

- Panopticlick was my suggestion too. I wonder if providing checkusers with some kind of a score comparing 2 browsers instead of actually showing them the underlying information - IPs, fonts, headers etc.- would be acceptable under the privacy policy (something like "User A connecting with UA X has a probability of 90.7% of being the same as User B connecting with UA Y"). This would allow even less-technical people to use the tools.--Strainu (talk) 00:29, 16 December 2017 (UTC)

- I have always longed for an AI tool that can compare editing patterns and language to detect possible matches between accounts, especially between blocked vandals and new accounts/IPs that pop up. Yger (talk) 08:59, 18 December 2017 (UTC)

- My two key priorities are:

- Enabling us to track a user when they change an IP address. I am not sure we should really block by user agent (say, blocking the latest version of Chrome on Android in a popular mobile range would probably not be a good idea) but blocking by device IDs (great if possible) or blocking by cookie look like good ideas.

- Proactive block of open proxies. We have no established cross-wiki list of open proxies. Each wiki has its own framework, either with administrators blocking manually or a bot checking some list. It would be very useful to have a global setup for this — NickK (talk) 16:35, 18 December 2017 (UTC)

- Blocking by user agent within some small range would be good, but it could cause too much collateral damage if applied by user agent alone across all IPs. Blocking by device ID sounds like a good idea. Cookie blocking for anons would stop many vandals, so I support it. I also strongly support proactively blocking open proxies globally. Stryn (talk) 17:25, 18 December 2017 (UTC)

- Regarding the second point: If it can be done in a way that does not violate our privacy policy, the proposal to block by device ID (including CheckUser search) sounds like a great solution. We do waste a lot of time blocking obvious puppets of vandals. Is it technically possible to get a unique ID from each machine? If so, after a preset number of blocks from a single device, the system could automatically prevent IP edits as a first step and, after another preset level, require email confirmation for new username registrations from the device. It would serve as a deterrent for the vandals but still allow users with constructive intentions at shared machines (like public schools etc.) to register and contribute using their accounts.--Crystallizedcarbon (talk) 18:55, 19 December 2017 (UTC)

- @NickK: @Stryn: @Crystallizedcarbon: Thank you all for sharing your thoughts! I've looked into ProcseeBot, PxyBot, and ProxyBot (verbal conversations about these accounts are maddening 😆) and documented what I've found here and on phab:T166817. I think proactively blocking open proxies is a smart move, and it just requires some agreement from stewards and others who manage abuse across wikis. As for blocking by UserAgent and Device ID: in the new year I'll be meeting with the WMF's legal department to check if we'll be able to capture this data in accordance with our privacy policy. Cookie blocking anons has already passed legal; it's just a matter of writing the software. I have some notes on requiring email addresses, which I'll post in the section below. — Trevor Bolliger, WMF Product Manager 🗨 23:29, 19 December 2017 (UTC)

- @TBolliger (WMF): And obviously you only looked at English Wikipedia. You can also look at MalarzBOT.admin on Polish Wikipedia or OLMBot and QBA-bot on Russian Wikipedia, which are also quite good (although their names are not as cool) — NickK (talk) 00:49, 20 December 2017 (UTC)

- Thank you for the list of other bots. To be fair, ProcseeBot was the first recommendation, but I also looked at ProxyBot on French Wikipedia and PxyBot on Japanese Wikipedia. — Trevor Bolliger, WMF Product Manager 🗨 01:10, 20 December 2017 (UTC)

- @TBolliger (WMF): Sorry for making a wrong claim. The French one is Proxybot (ProxyBot is from English Wikipedia but is inactive), hence my confusion. PxyBot is indeed Japanese, so you did do global research; sorry for my mistake — NickK (talk) 11:24, 20 December 2017 (UTC)

- The measures used by Panopticlick more or less break down if the user invokes private browsing, especially in browsers that have some kind of stealth mode that avoids fingerprinting. In general this has no good solutions; the only solution that really works is to be able to set a protection level for an article or group of articles to «identified users». There is another solution that partially works, and that is to fingerprint the user instead of the browser. The problem is that we need a history for the user to be able to detect abuse, which is where it more or less breaks down. One solution that does work, but not at a primary level, is to increase the cost of creating new accounts. There are several methods to do that; one is simply to let logged-in users get more creds over time so that they might, for example, post on user pages or create pages in the main space. That means a new account simply might not solve the problem when they want to avoid a block.

- A typical user fingerprinting technique is to do a timing analysis of typing patterns. This can be done in a secure way by adding some known noise to the patterns, and the patterns can even be obfuscated and/or encrypted in the database. One way to use it could be to check whether an unknown contributor on a page is in fact a previous contributor. The answer would only be a probability, but together with sentiment analysis it could give a very clear indication that something weird is going on. Another interesting implementation is to use this for a rolling autoblock. If someone is blocked for a short time, then that user's fingerprint is added to a rolling accumulated pattern. It is possible to check all active users against such a pattern in real time. This is similar to what is done in spread-spectrum radio. — Jeblad 00:08, 27 December 2017 (UTC)

- @Jeblad: Thank you for your comments. By 'typing patterns' do you mean how quickly (or slowly) they type on their keyboard? Or something else? — Trevor Bolliger, WMF Product Manager 🗨 01:07, 3 January 2018 (UTC)

- @TBolliger (WMF): There are several such systems, but the most common one uses typing delays between individual pairs of characters. Because it takes time to build such typing patterns, it only works for accounts with a history, so the "cost" of creating a new account should be sufficiently high to prevent people from creating throw-away accounts. — Jeblad 11:31, 3 January 2018 (UTC)
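
To make the "typing delays between individual pairs of characters" idea concrete, here is a minimal sketch in Python. It is not any existing MediaWiki feature; the function names, the input format (a list of character/timestamp tuples) and the distance measure are all assumptions made for illustration, and it leaves out the noise and encryption steps mentioned above.

```python
from collections import defaultdict
from statistics import mean


def digraph_profile(keystrokes):
    """Map each character pair to its mean delay in milliseconds.

    `keystrokes` is a hypothetical input format: a list of
    (character, timestamp_ms) tuples in the order they were typed.
    """
    delays = defaultdict(list)
    for (prev_char, prev_t), (char, t) in zip(keystrokes, keystrokes[1:]):
        delays[prev_char + char].append(t - prev_t)
    return {pair: mean(values) for pair, values in delays.items()}


def profile_distance(profile_a, profile_b):
    """Mean absolute delay difference over the pairs both profiles share.

    Returns None when the profiles share no pair, because without a
    common history no comparison is possible.
    """
    shared = profile_a.keys() & profile_b.keys()
    if not shared:
        return None
    return mean(abs(profile_a[pair] - profile_b[pair]) for pair in shared)
```

A small distance would only suggest that two samples might come from the same typist. As noted in the thread, the result is a likelihood rather than proof, and an account with little typing history yields too few shared pairs to compare.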

Problem 2. Aggressive blocks can accidentally prevent innocent good-faith bystanders from editing

Previous discussions

- For the first point, I think we should require all accounts to have an email address attached (and verified/confirmed) in their settings at all times before any other action is taken, regardless of how they registered. A specific email address should only be on one account and not on another, unless the user removes it from their original account. Tropicalkitty (talk) 00:02, 14 December 2017 (UTC)

- I see now that this was mentioned by another individual earlier... Tropicalkitty (talk) 00:53, 14 December 2017 (UTC)

- Agree this has a lot of potential. We want to stop the 300-account sockfarms. Creating lots of email accounts would be a significantly increased burden for those who do one account per job. Doc James (talk · contribs · email) 06:16, 14 December 2017 (UTC)

- Verifying accounts is a good idea. However, we should also ignore dots (.) within Gmail addresses, because evabraun@ and eva.braun@... are technically different addresses, yet both of them go to the same Google mailbox. --Goo3 (talk) 08:18, 14 December 2017 (UTC)

- It's really not hard to create lots of email accounts, actually. You only need a "catch-all address" to get an unlimited number of addresses on a single domain. Google Apps offers that by default. And obviously you cannot limit the number of accounts on a domain without hurting legitimate email providers. Email in itself is just as unreliable as IPs for identifying sockpuppets.

- The solution I see here is to automatically block the "browser" (see Problem 1 above for identification methods) along with the user, including autoblocking new browsers from where the user might try to connect. The better we can identify the computer, the less collateral damage.--Strainu (talk) 00:41, 16 December 2017 (UTC)

- The stated issue (in the headline) is not a problem for us at svwp. Yger (talk) 09:01, 18 December 2017 (UTC)

- There is no perfect solution here. Requiring email confirmation may be a barrier for some people (I know Wikimedians with thousands of edits who do not have any email, i.e. they have never used email at all). In addition, in some cases the creation of multiple accounts from the same IP (I know a university network where everybody on the campus has the same IP) or even the same browser (a public computer in a library) is legitimate — NickK (talk) 16:45, 18 December 2017 (UTC)

- Our Anti-Harassment Tools team has no preconceived notion of what we’re going to build — we legitimately want to see where the most energy and confidence exists for solving the problems with the existing blocking tools. I just want to chime in with my concerns about using email as a unique identifier.

- Requiring email confirmation for all users is a much larger question (and fundamentally goes against our privacy policy and mission.) This talk page isn’t the right space to debate that decision.

- However, it has been proposed that we build the functionality that accounts can be created and used within an IP range block if (and only if) they have a unique confirmed email address linked to their account. This presents its own set of problems, none of which are insurmountable (but they do add up.) Because creating a throwaway email account is dead simple, we’d likely need to build a whitelist/blacklist for supported email domains. We’d need to build a system that strips periods and plus-sign postpended strings. We’d also need to build a way to check if the email address is unique or already in use by one of the existing millions of accounts.

- This seems like a lot of effort to limited effect. Gmail accounts can be created in under 30 seconds, bypassing all these checks. But as some have said, if creating a sock takes 30 seconds longer it might be just enough of a time deterrent. — Trevor Bolliger, WMF Product Manager 🗨 23:40, 19 December 2017 (UTC)

- This will also probably mean introducing rules per mailing server: Gmail might have one set of rules, Yahoo might have another one, and my own server will redirect any unique email to server admin, i.e. me.

- I do agree the problem exists, but the main reason of this problem is that we have to implement aggressive blocks as we do not have any better solution. Having better solutions would reduce the number of blocks that are too aggressive — NickK (talk) 00:41, 20 December 2017 (UTC)

- TBolliger (WMF), I apologise for the input if it would break those policies. I don't know what else to say about this specific problem. Tropicalkitty (talk) 18:49, 20 December 2017 (UTC)

- There's definitely no need to apologize. These are difficult problems to solve and all ideas deserve a fair opportunity for consideration. — Trevor Bolliger, WMF Product Manager 🗨 22:44, 20 December 2017 (UTC)
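
The address normalization raised in this thread (ignoring Gmail dots, stripping plus-sign suffixes, and applying different rules per mail server) could be sketched roughly as follows. This is an illustration only: the `NORMALIZATION_RULES` table, its contents and the function name are assumptions, and a real deployment would need a vetted per-provider rule set.

```python
# Hypothetical per-domain rules. Only domains listed here are rewritten,
# because dot and plus handling differs from one mail provider to another.
NORMALIZATION_RULES = {
    "gmail.com": {"strip_dots": True, "strip_plus": True},
    "googlemail.com": {"strip_dots": True, "strip_plus": True},
}


def normalize_email(address):
    """Return a canonical form of `address` for uniqueness checks."""
    local, _, domain = address.strip().lower().rpartition("@")
    if not local or not domain:
        raise ValueError(f"not an email address: {address!r}")
    rules = NORMALIZATION_RULES.get(domain, {})
    if rules.get("strip_plus"):
        # Drop everything from the first plus sign onward.
        local = local.split("+", 1)[0]
    if rules.get("strip_dots"):
        local = local.replace(".", "")
    return f"{local}@{domain}"


def is_unique(address, known_normalized):
    """Check a new address against a set of already normalized addresses."""
    return normalize_email(address) not in known_normalized
```

For example, `normalize_email("Eva.Braun+wiki@gmail.com")` gives `evabraun@gmail.com`, while an address on an unlisted domain is only lowercased. As pointed out above, a catch-all domain defeats this kind of check entirely, so it can raise the cost of creating a sock but cannot prevent it.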

- Twinkle is mentioned, but in some projects like eswiki it is not functional. Huggle is a very popular and effective tool against vandalism. Most of the time it keeps track of the number of warnings issued and increases the level of the templates until the maximum is reached, and then it automatically allows for posting to WP:AIV for enwiki and similar noticeboards for other projects. It is a powerful tool, but it does not always track the warning level correctly. In the Spanish project it often keeps posting the level 1 warning over and over, which forces patrollers to stop and file a manual report. --Crystallizedcarbon (talk) 18:56, 19 December 2017 (UTC)

- We'd be interested in making improvements to Twinkle or Huggle, if there is support from the communities that use them most or that want them most! Personally, I think that a tool that automates warnings and blocks would help both with admin productivity and with setting consistent, fair blocks, when blocks are appropriate. — Trevor Bolliger, WMF Product Manager 🗨 23:40, 19 December 2017 (UTC)

- I would be glad if someone could make Twinkle easy to configure, or even better, make it an opt-in tool for Wikimedia wikis. The new wikitext editor (2017) can't support fi:Järjestelmäviesti:Edittools.js (which includes all warning templates, block messages and important messages that can be used on articles), which is shown below the edit window in the old editor. Twinkle would help. Stryn (talk) 16:03, 20 December 2017 (UTC)

- Disable range blocks; they are completely defunct as they are now. Use closeness in IP address space to other trolls (aka build an IP range) to identify which requests to inspect, and use timing analysis to locate those that should be given a temporary ban. An even better solution would be to do a co-occurrence analysis on troublesome IP addresses, to identify how the IP addresses change when an address is blocked. That would even identify how addresses are reassigned inside an ISP. [An IP range together with detection of physical location could work. Location can be found by timing analysis on requests to servers at different physical locations.] — Jeblad 00:24, 27 December 2017 (UTC)

- Good suggestions, thank you. However, it is possible to spoof IPs and network speeds via proxy networks or other tactics. — Trevor Bolliger, WMF Product Manager 🗨 01:14, 3 January 2018 (UTC)

- Wide-range IP-based blocks are a huge problem. Several times I have met people who wanted to contribute but couldn't because their IP lies close to the IP range of some school, etc. I'm happy that finally someone is looking into improving this! But please be careful not to shut out other people! Not everyone has an email address! (Yes, really, and the number is growing again because kids nowadays use WhatsApp etc. rather than mail.) We also already have the problem that when running a big Wikipedia workshop we hit the account creation throttle! (So provide ways around it in legitimate cases!) What I would propose is to allow setting an IP range to semi-blocked, meaning you have to create an account and verify your email address to edit. But keep adding an email optional for normal IP ranges. For the future we would need even more flexible and intelligent systems. -- MichaelSchoenitzer (talk) 15:44, 31 December 2017 (UTC)

- @MichaelSchoenitzer: Good point about not everyone having an email address — the way people communicate and exist online is changing. What do you mean by more flexible and intelligent systems, and why wouldn't we build them now if we have the opportunity? — Trevor Bolliger, WMF Product Manager 🗨 01:14, 3 January 2018 (UTC)

- By more flexible I mean that we should not only have blocked and not-blocked IP addresses, where users may do nothing or anything respectively, but have more fine-grained options. From some IP ranges one may not edit as an IP but may create an account; for another you might need to fill out a captcha; for others you may even need to add a verified email; and the maximum number of accounts created per day from one IP address might be 1 for some ranges and 100 for others. Right now some Wikipedias use machine learning to prevent spam and vandalism, others use flagged revisions, and others use only the IP-blocking system. In the future we could have a combination of all three systems; they should not work side by side but together as one system. By more intelligent I mean that admins should not have to worry about setting all these options; the system could intelligently pick them itself, blocking IPs automatically after too many reverts, etc., and the human would be "only" the control instance, overruling decisions made by software. That's my vision. But to get there you'd need to do a lot on the software, do a lot of research and have a lot of (not always easy) discussion with the community. -- MichaelSchoenitzer (talk) 13:14, 6 January 2018 (UTC)

- @MichaelSchoenitzer: Thank you for explaining. I agree that in the long-long-term it would be ideal if the system could evaluate the optimal length and technical tactics with which to prevent disruptive users from returning to the wiki, while limiting potential collateral damage. And I agree that all these tools should work as one system; if this talk page discussion leads to a new type of block, we will want to build it into existing tools and workflows without getting in the way. — Trevor Bolliger, WMF Product Manager 🗨 17:29, 8 January 2018 (UTC)

- I've brought it up before, though hell if I remember where the Phab ticket is at, but allowing rangeblocks to only affect certain useragents can really reduce some of the harm of our blocks on the English Wikipedia. There are some cases where it wouldn't work, but quite a few where it would. -- Amanda (aka DQ) 21:18, 9 January 2018 (UTC)

- Here you go: phab:T100070. I think it's a pretty good suggestion. — Trevor Bolliger, WMF Product Manager 🗨 21:46, 9 January 2018 (UTC)
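
The graded, per-range restrictions proposed earlier in this section (account required for one range, captcha or verified email for another, full block for a third) could be modelled along these lines. The restriction levels, the `RANGE_POLICIES` table and the "strictest match wins" rule are all assumptions for illustration; the addresses are documentation-only ranges, and nothing here reflects how MediaWiki stores blocks.

```python
import ipaddress
from enum import IntEnum


class Restriction(IntEnum):
    """Hypothetical restriction levels, ordered from mildest to strictest."""
    NONE = 0
    ACCOUNT_REQUIRED = 1
    CAPTCHA = 2
    VERIFIED_EMAIL = 3
    FULL_BLOCK = 4


# Example policy table using documentation-only address ranges.
RANGE_POLICIES = [
    (ipaddress.ip_network("198.51.100.0/24"), Restriction.ACCOUNT_REQUIRED),
    (ipaddress.ip_network("203.0.113.0/24"), Restriction.VERIFIED_EMAIL),
    (ipaddress.ip_network("203.0.113.64/26"), Restriction.FULL_BLOCK),
]


def restriction_for(ip_string):
    """Return the strictest restriction among all ranges containing the IP."""
    ip = ipaddress.ip_address(ip_string)
    matches = [level for network, level in RANGE_POLICIES if ip in network]
    return max(matches, default=Restriction.NONE)
```

Letting the strictest matching range win is one possible design choice. A per-range account creation limit or a user-agent condition, as suggested in phab:T100070, could be added as extra fields on each policy entry.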

Problem 3. Full-site blocks are not always the appropriate response to some situations

Previous discussions

- One of the ways that the Dutch WP-AC has effectively minimized problems with certain users is to set a maximum number of contributions per day for a namespace for a user. It would be great if this could be captured in a block type. Whaledad (talk) 22:10, 8 December 2017 (UTC)

- The way the Dutch-language Arbitration Commission handles those enforcements is wholly unproductive, as in the case w:nl: Wikipedia:Arbitragecommissie/Zaken/Blokkade JP001, where a user was allowed a maximum of 10 (ten) edits a day but was given a life-long block that could only be appealed after at least 6 (six) months. Cases like this aren’t described in any rule or guideline on Dutch Wikipedia but are still enforced. The Arbitration Commission doesn't exist to uphold the preventive nature of blocks and basically cannot be appealed to for unblocking/unbanning (when was the last time they actually unblocked anyone?); it only exists to sanction editors. Though I agree that specialised blocking would help enforce these, why are users being permabanned for making 11 (eleven) non-disruptive edits? The ban-happy culture should be addressed as well, and having specific block settings may be a first step in this direction. The current focus of the administrative elite and the Wikimedia Foundation staff is not on editor retention or even content improvement but on making sure that people can’t edit. No actual troll has ever had any issue with evading their blocks, but good faith editors like JP001 are the victims of a culture that justifies permanently getting rid of good faith editors with the excuse that their measures exist only to stop abusers they know they can’t ever get rid of. What baffles me is how little the moderators (or admins) of Dutch Wikipedia actually have to abide by policy. Under the rules and guidelines, non-vandals are protected from indefinite blocks for such small misdemeanors by the “verhogingsdrempel”, but the moderators seem to believe that for certain editors the rules shouldn't apply and a permaban is in place. JP001 did not harass anyone or vandalise anything, but is being punished more harshly than those who actually disrupt the project.
--Donald Trung (Talk 🤳🏻) (My global lock 🔒) (My global unlock 🔓) 12:16, 11 December 2017 (UTC)

- Site-bans should be a last resort and not a first, and good faith users who made some mistakes or got angry one day shouldn't be given life-long bans over it. Blocking users from accessing others' talk pages and/or creating edit summaries would help more if those users have never made a disruptive mainspace edit in their lives. --Donald Trung (Talk 🤳🏻) (My global lock 🔒) (My global unlock 🔓) 12:34, 11 December 2017 (UTC)

Strong support Blocks are used as prevention rather than punishment. If it was allowed to block a user from editing in a specific namespace, many users who contribute a lot to articles while persistently making personal attacks on talk pages would not be forced to leave our site. --Antigng (talk) 17:05, 13 December 2017 (UTC)

- @Antigng: In all my reading of various noticeboards and reported cases of harassment, I have repeatedly seen this damned-if-you-do damned-if-you-don't dilemma with site blocks for uncivil yet productive users: either the content suffers by blocking them or the atmosphere of civility suffers by not blocking them. — Trevor Bolliger, WMF Product Manager 🗨 22:42, 15 December 2017 (UTC)

- Thanks for kicking this discussion off! As an admin on English Wikipedia and having observed issues in some other, smaller communities, I think the crux of the issue is that the current ban hammer is "all or nothing". Topic bans (applying to a page or category) are already a policy on many large Wikipedias like English, and being able to enact these in code would be a great way to pave the cowpaths and allow for more flexible blocking while retaining more contributors. Being able to apply more specific types of bans might also help discourage use of sock puppets to evade bans. Steven Walling • talk 23:07, 13 December 2017 (UTC)

- Thank you, @Steven Walling: for jumping in! Great analogy. On English Wikipedia we've found that topic bans are nebulous by design ("broadly construed") so applying a simple page/category/namespace block wouldn't be the 1:1 same. Once we winnow this laundry list of possible block improvements to a manageable few, we will explore how they would work with/against existing policies on the largest wikis. But in general I agree — the blockhammer is too strong for all situations. — Trevor Bolliger, WMF Product Manager 🗨 22:42, 15 December 2017 (UTC)

In terms of solutions to this problem, I agree with all the points (as of the time of this post) except for blocking the contributions (if it's on one user that can't see contributions from all users from a project). Tropicalkitty (talk) 00:13, 14 December 2017 (UTC)

- @Tropicalkitty: By 'blocking the contributions' do you mean blocking a user's ability to view Special:Contributions (like this example)? Or something larger? — Trevor Bolliger, WMF Product Manager 🗨 00:24, 14 December 2017 (UTC)

- Yeah, that's what I meant by it. Tropicalkitty (talk) 00:27, 14 December 2017 (UTC)

- Thanks for clarifying! (I agree with you, it seems like a clumsy solution and against the ethos of a collaborative system.) — Trevor Bolliger, WMF Product Manager 🗨 00:41, 14 December 2017 (UTC)

- I do not recognize this as a big issue for us at svwp. An unserious user mostly also discusses in ways that conflict with our etiquette, so will be blocked for that reason as well. Yger (talk) 09:05, 18 December 2017 (UTC)

Strong support. In Ukrainian Wikipedia we heavily rely on this option, but we implement it using AbuseFilter, which is not a very optimal solution. There are many possible options, including blocking from editing a namespace (e.g. user cannot edit templates), from editing a group of pages (e.g. user cannot edit articles about living politicians), from editing a specific page (e.g. user cannot edit a page where they were engaged in an edit war) or vice versa (e.g. user can edit only a specific page: they violated rules but had to prepare a page of an offline event at the same time). All the examples above are based on real-life cases. We really need a good tool for these cases — NickK (talk) 16:51, 18 December 2017 (UTC)

Support. Estonian Wikipedia has a low threshold of notability and some users write articles about themselves / their companies. Estonian wiki needs a blocking option that does not allow users to write about themselves. Taivo (talk) 18:56, 18 December 2017 (UTC)

Comment Topic ban, namespace ban... all that goes in the right direction IMO. I would like to share some ideas on possible options that cross my mind:

- Blocking range
  - Area range
    - Full site (by default)
    - Namespace
    - Topic (categories? block the user from editing everything inside a specific category?? why not?)
    - Single page ban
      - possibility to add several pages, with an option for each page to include its sub-pages or not
    - ...
  - Edit range (prevent the user from performing some actions)
    - Revert
    - Upload
    - Use one or several specific tools of the project
    - ...

- But there will always be the same problem of potential block evasion attempts, therefore this problem is closely linked to the problem 1 above, and limited blocks, whatever they are, must be by default accompanied by the usual precautions (prevent account creation, apply same limited block to last IP address used by this user, and any subsequent IP addresses they try to edit from). --Christian Ferrer (talk) 20:13, 18 December 2017 (UTC)

- @Taivo: @NickK: @Christian Ferrer: Thanks for your thoughts, support, and context. I think providing a more granular blocking tool would offer a wide range of benefits for helping nip edit wars, harassment, POV conflicts, and other forms of user misbehavior in the bud. My biggest concern, though, is over-engineering a system. In my mind, just implementing per-page blocking (assuming one user can be blocked from multiple pages with different expirations) will deliver most of the benefit. If you had to prioritize just one or two to build, what would it be? — Trevor Bolliger, WMF Product Manager 🗨 00:09, 20 December 2017 (UTC)

- @TBolliger (WMF): If I had to choose one thing it would be per-user AbuseFilter. AbuseFilter can implement most of these (block from editing a page, a namespace, all pages but a given one etc.) but using AbuseFilter applying to all others with conditions per user is too costly — NickK (talk) 00:35, 20 December 2017 (UTC)

- @NickK: Duplicating or extending the AbuseFilter would be a major undertaking, so it's unfortunately just outside the scope of what our team can build in our available time. — Trevor Bolliger, WMF Product Manager 🗨 01:15, 20 December 2017 (UTC)

- @TBolliger (WMF): Well, I thought it could have been easier as this would rely on the existing basis, i.e. using AbuseFilter core, instead of developing a completely new solution. If this is not possible banning a user from editing an individual page and/or a namespace would be my two priorities — NickK (talk) 12:46, 20 December 2017 (UTC)

- My preference would go to individual pages blocking with a possibility of cascading on the subpages. --Christian Ferrer (talk) 05:44, 20 December 2017 (UTC)

- I really like this idea. I think it would be useful if it had some of the options suggested by Christian Ferrer. I also like the idea of having the option for cascading by subpage and/or category. ···日本穣? · 投稿 · Talk to Nihonjoe 00:01, 17 January 2018 (UTC)

- I believe the only way this could work is as a topic ban on a page, and if that page is a category then it should apply on every member of that category. — Jeblad 00:29, 27 December 2017 (UTC)

- I think the two priorities are the two opposites: individual page, and namespace. Topic bans at the enWP tend to inevitably lead to disputes about just where the boundaries are, and Wikiproject bans would have the problem that anyone can add a Wikiproject banner to a page. The other intermediate or specialized options are less critical. Being able to enforce bans programmatically is much better than doing it by Arbitration enforcement, which leads to continual complaints of unfairness. Editfilter-based schemes have the problem that using too many of them causes performance degradation; enWP already has most of the existing ones inactivated. I recognize that other wikis will of course have different needs. DGG (talk) 20:49, 27 February 2018 (UTC)
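
To make the per-page and namespace options discussed in this thread more concrete, here is a minimal sketch of how such restrictions could be checked. It is not MediaWiki code: the `Restriction` class, its fields, and the `can_edit` function are hypothetical names chosen for illustration. It assumes that `title` is the full page title, that subpages are separated by `/`, and that one user may carry several restrictions with different expirations.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class Restriction:
    """One hypothetical restriction attached to a user (illustrative only)."""
    expires: Optional[datetime] = None  # None means the restriction never expires
    page: Optional[str] = None          # full page title the user may not edit
    include_subpages: bool = False      # also cover titles starting with "<page>/"
    namespace: Optional[str] = None     # namespace the user may not edit

    def is_active(self, now: datetime) -> bool:
        return self.expires is None or now < self.expires

    def covers(self, namespace: str, title: str) -> bool:
        # Namespace-wide restriction.
        if self.namespace is not None and namespace == self.namespace:
            return True
        # Single-page restriction, optionally cascading to subpages.
        if self.page is not None:
            if title == self.page:
                return True
            if self.include_subpages and title.startswith(self.page + "/"):
                return True
        return False


def can_edit(restrictions: Iterable[Restriction], namespace: str,
             title: str, now: datetime) -> bool:
    """Allow the edit unless some active restriction covers the page."""
    return not any(r.is_active(now) and r.covers(namespace, title)
                   for r in restrictions)
```

A category-based ("topic") restriction would need a lookup of the page's categories, which is left out here because category membership can be changed by any editor, a concern raised above for WikiProject banners as well.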

Problem 4. The tools to set, monitor, and manage blocks have opportunities for productivity improvement

- I love and hate the warning count. I love it because some users tend to delete or archive warnings so that their talk page looks clean. I hate it because I saw people send warnings just to harass others. No strong opinion on other points — NickK (talk) 16:59, 18 December 2017 (UTC)

- I wonder if the option of indefinite bans should be removed, or moved to a higher level of admins. When a ban times out it could be set so a lower level type of admin could reset it, or even an ordinary user. One alternative could be that some bad-faith activity could automatically trigger a resetting of the ban, with an increasing restraint over time. This could even be activated by a warning to the user, thereby giving the user better reason to resolve the issue peacefully. This is also dangerous, as it gives real trolls a tool to silence opposition. — Jeblad 00:36, 27 December 2017 (UTC)

- I am curious if others will share their thoughts on these suggestions, but they seem to be a tough sell (eliminating indefinite blocks and requiring bureaucrats to set blocks). I can see a world where a new usergroup is added who has training specifically for blocking, and I also like the concept of 'reset block' as a separate permission. I can also see the software asking "are you sure?" for indefinite blocks. But these changes will require a lot of per-wiki community agreement. — Trevor Bolliger, WMF Product Manager 🗨 01:20, 3 January 2018 (UTC)

Ottawahitech's perspective on this initiative

One cannot come up with solutions without first identifying the problem they are trying to fix. Introducing more complexity into an area that is already falling apart because of mass confusion and a lack of consistency will only make matters worse.

The English wikipedia now has tens of thousands of indefinitely blocked users. Blocked users are getting more and more common, and more and more productive good faith editors are labeled bad actors. This is happening with alarmingly increased regularity and with little discussion and sometimes without any evidence. More and more lynch mobs sitting at ANI are "prosecuting" other editors that they simply do not like. It is not unheard of to have long-term, prolific editors banned or blocked after a short discussion initiated by an editor no one has heard of before. Sometimes the editor does not get notified of the discussion in which they are blocked.

This whole area on the en-wiki is full of inconsistently applied rules and technical complexity. Not even long-term/clueful users know who is banned and who is blocked, what the difference is between these two, and how a "community sanction" fits into this. There are no standards about how to label blocked users. Some indef-blocked users are not added to the category I mentioned above. Some blocked users are blocked/banned "in secret" and there is no mention of their block on their user pages (example). Some indef-blocked users cannot easily appeal their block when their talk page access is blocked by an admin without discussion. Other editors are not permitted to appeal on behalf of so-called bad actors.

No one knows how many have been blocked for the following reasons:

- Disruption (of what?)

- long term abuse

- Continuing to participate by proxy

- long term failure to abide by basic content policies

- ???

From previous wiki-experience I already know that my posting here will only get me into further trouble from shoot-the-messenger participants. I also know I will be told this is not the correct location for posting this - and my posting (which took a fair bit of effort) will be removed, sigh… Ottawahitech (talk) 17:32, 15 December 2017 (UTC) Please ping me

- @Ottawahitech: If you (or anybody) are concerned about retribution for your participation on this discussion we welcome you to email us your thoughts directly, in which case we'll include your input in our bi-weekly summaries but not attribute your username.

- We are looking at improvements to ANI and other Harassment reporting workflows. Our first step is to analyze the results of a recently-run survey about ANI. The WMF's Anti-Harassment Tools team is predominantly focusing on building blocking tools for the first half of 2018 but the second half is reserved for building an improved reporting system. Our preliminary research is already underway.

- I agree that blocks are serious and should be more traceable to an actual reason. This is why we included Problem 4 on this discussion. Do you have any thoughts on how Special:Block could be changed to ensure that blocks are more transparent and consistent? — Trevor Bolliger, WMF Product Manager 🗨 00:37, 20 December 2017 (UTC)

- @TBolliger (WMF): thank you for pinging me, however your message above mystifies me:

- results of a recently-run survey about ANI: I followed your link and the only survey I found mentioned on that page is a survey of Administrators, not a survey of the general population of the en-wikipedia.

- If you (or anybody) are concerned about retribution: My concern was that my input would be removed from this page. My comments have been removed from talk-pages over the years when I tried to express a dissenting view (en-wiki being silenced edit summaries).

- Do you have any thoughts on how Special:Block: I have no idea what Special:Block does. When I click on it, it informs me that I have committed a permission error (and is probably causing me to be logged somewhere and added to the so-called badactors list?)

- I also note that the WMF has apparently not detected the groundswell of distrust of admins by non-admins. Why are you building tools that further empower wiki-admins in subduing non-admins? (Example:Features that surface content vandalism, edit warring, stalking, and harassing language to wiki administrators and staff). Why not build tools that help both harassed non-admins and harassed admins defend themselves? Ottawahitech (talk) 10:24, 27 December 2017 (UTC) Please ping me

- @Ottawahitech: Special:Block is the blocking tool; clicks on it are not logged except maybe in the servers, not accessible to anyone but sysadmins. Jo-Jo Eumerus (talk, contributions) 10:46, 30 December 2017 (UTC)

- @Ottawahitech: Sorry for the wrong link, I updated the page I linked to so that it also points here. As Jo-Jo mentioned, Special:Block is the page used to set blocks. If you are not an admin then you will see a 'permission denied' error page. Attempting to view this page does not log your username for any future purposes, it's just like viewing any other nonexistent page. — Trevor Bolliger, WMF Product Manager 🗨 01:41, 3 January 2018 (UTC)

- I don't think admins should be able to indefinitely block users, and they should not be able to block users from appealing stupid bans. There are a lot of admins that have no clue and use their ability to block other users as a d**k-extension. The system should be made in such a way that blocking good-faith editors comes with a cost. If the cost is acceptable for the admin, then they should block. If the cost is not acceptable, then they don't. Now the cost is non-existent, and that makes it too tempting to block other users. This is really about what the users gain by good behaviour, and the cost of bad behaviour. Now the system is without any type of cost at all. — Jeblad 00:45, 27 December 2017 (UTC)

- @Jeblad: I don't think admins should be able to indefinitely block users: If you mean without due process I would certainly support that. I would also add: stop admins from deleting/blanking userpages of blocked users without due process. Ottawahitech (talk) 10:44, 27 December 2017 (UTC) Please ping me

- @Ottawahitech: I agree. Easiest solution would be to make this a bureaucrat-only right. — Jeblad 19:49, 27 December 2017 (UTC)

- I replied to your proposals about eliminating indefinite blocks and requiring bureaucrats to set blocks in the "Problem 4" section above. There is already a cost associated with blocking as with any other administrative action: all actions are publicly logged and can be reviewed by other users. Their reputation is the cost. There are many admins across all wikis and some certainly wield their status and abilities with more responsibility than others, but I do not see this as "empower[ing] admins in subduing non-admins." There are certainly some malicious users who should be entirely prevented from participating at any point in the future, and there are certainly some disruptive users who should have their behavior addressed but they should be retained for their future contributions.

- I think the Block tool could potentially add a check for unnecessarily long blocks, such as by requiring the block reason first and for admins to explicitly state why they are setting a longer block than the system default. We'll need to make sure this doesn't cause too much of a burden on existing workflows, as these tools should serve all users in the end. — Trevor Bolliger, WMF Product Manager 🗨 01:41, 3 January 2018 (UTC)
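
The check described in the comment above (reason first, plus an explicit explanation for blocks longer than the default) could look roughly like the sketch below. Everything in it is an assumption made for illustration: `validate_block_request`, the 24-hour `DEFAULT_BLOCK_LENGTH`, and the message texts are hypothetical and do not describe how Special:Block behaves today.

```python
from datetime import timedelta
from typing import List, Optional

# Assumed system default; a real wiki would configure its own value.
DEFAULT_BLOCK_LENGTH = timedelta(hours=24)


def validate_block_request(reason: str,
                           length: Optional[timedelta],
                           justification: str = "") -> List[str]:
    """Return a list of problems; an empty list means the form may be submitted.

    `length=None` stands for an indefinite block.
    """
    problems = []
    if not reason.strip():
        problems.append("A block reason is required.")
    longer_than_default = length is None or length > DEFAULT_BLOCK_LENGTH
    if longer_than_default and not justification.strip():
        problems.append("Explain why this block is longer than the default.")
    return problems
```

A block at or below the default length needs only a reason, so the common workflow gains no extra step; the additional field appears only for longer or indefinite blocks.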

Blocked user continues harassment on other versions

At svwp we have had a problem with users explicitly harassing other colleagues, where the affected users also indicate on their talk pages that they are fragile. Of course we blocked the user harassing other users. But they then went on to Meta and, in its Requests for comment, continued their harassment, targeting especially the colleagues' particular fragility. We were very unhappy that we could not stop the case on Meta. It did of course die down there, but at moments we were worried not only that the harassed users would quit but also that it could affect their physical wellbeing. Could we please find a procedure to stop harassment from other versions? Yger (talk) 09:20, 18 December 2017 (UTC)

- I have seen somewhat similar cases on Ukrainian Wikipedia: a user having an interaction ban circumvented it by harassing these users on other wikis (e.g. a user banned on Ukrainian Wikipedia uses Russian Wikipedia to contact a ukwiki user or vice versa) — NickK (talk) 16:55, 18 December 2017 (UTC)

- @Yger: @NickK: Cross-wiki harassment is indeed a problem. The Anti-Harassment Tools team has made commitments to build tools specifically to address this problem in 2018 or 2019, both by empowering Stewards with better tools and also by implementing better safeguards for individual users on wikis where they don't frequently edit.

- Users can be locked from their account if they commit serious cross-wiki trouble. We could build global blocks if there is strong support, and I believe it might be helpful if block information from one wiki is displayed on other wikis if the user is currently blocked. What do you think would be the most effective counter-measures that we could build? — Trevor Bolliger, WMF Product Manager 🗨 00:56, 20 December 2017 (UTC)

- We have a set of users blocked on svwp for POV and etiquette who continue on enwp. This is an irritation, as they enter biased info on enwp which needs detailed knowledge to spot. But still, in those cases I think we must accept that all wikis are autonomous, so I do not see broad blocking as something wanted. I am only talking of serious harassment that could cause harm to the harassed party IRL. I believe what is needed is a procedure for global blocks in cases of serious harassment. Such a thing should be channelled through Meta (and the global stewards). But I actually think these cases need to be handled off-wiki: people's wellbeing is at risk, and prolonged discussion and details of the IRL frailty would be even more harmful for the attacked person. Yger (talk) 09:31, 20 December 2017 (UTC)

- I also want to highlight that svwp is a small community. That makes it possible to treat contributors on an individual basis. If someone states that they are on sick leave because of burnout or a specific psychic ailment, we can make sure we allow for the deviations in behaviour that can occur, and also be extra sensitive to bad behaviour towards them. So what we tolerate in harsh behaviour (and harassment) can be less than on the bigger sites, including Meta. Yger (talk) 11:27, 20 December 2017 (UTC)

(Out-dent) @Yger: @NickK: Unfortunately it seems that this happens more with users from smaller wikis. The global bans process was created to address some aspects of this issue but has never been widely used. There is the potential for the Wikimedia community to consider more improvements. Right now there is a community request for comment happening about some possible improvements.

While this current discussion is more related to software development for tools, part of the Wikimedia Foundation's Community health initiative's work is to support the community as it considers policy changes that might make the wikis more welcoming. We can capture these ideas for future discussions in 2018 when the topic will come up again. SPoore (WMF) (talk) , Community Advocate, Community health initiative (talk) 04:15, 23 December 2017 (UTC)

- @SPoore (WMF): Just to clarify, I do not mean that global bans are a good solution. The cases I mentioned concern users who have overall rather good contributions and are not blocked in home wikis but are often banned. For example, user B in Ukrainian Wikipedia has a ban on interactions with user A, thus they use A's talk page in Russian Wikipedia to send them not quite friendly messages. I don't think it is bad enough to deserve a global ban but probably bad enough to need an action — NickK (talk) 04:23, 23 December 2017 (UTC)

- I believe this can be handled by a global credit system. You do GoodThings™ you get credits. You do BadThings™ you lose credits. And make the credits visible to other users. Then what someone does on a small project won't be invisible. Problem is that some of the bad things are really processes on the wikis, like deletion requests. Users are actually harassing each other by systematically going after articles and deleting them. Should you then give the user credits for doing some work, like deleting the article, or take credits from them because they are harassing someone by deleting their article? — Jeblad 00:56, 27 December 2017 (UTC)

- How do we prevent factionalism, people giving upcredits to friends and removing them from enemies? And we delete lots of articles because they are spam, vandalism etc.; should deleting such stuff result in downcredits? Jo-Jo Eumerus (talk, contributions) 18:44, 4 January 2018 (UTC)

- If you only have a limited amount of credits there will be a cost however you are using them. It is also possible to give fewer credits to those that routinely give credits against the mean vote. You want to give them some credits, but not too much, or you can weight the credits. Several alternatives are described in various papers, but the general idea is that you need some cost function. — Jeblad 17:04, 20 January 2018 (UTC)
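
One possible reading of the cost function described above, written as a small sketch rather than a worked-out proposal: every vote spends from a limited budget, and raters who routinely vote against the mean count for less. The `CreditLedger` class, the budget of 5, and the weighting formula are all assumptions made for illustration; none of them comes from the papers mentioned, and the sketch does not by itself solve the factionalism problem raised in this thread.

```python
from collections import defaultdict
from statistics import mean


class CreditLedger:
    """Hypothetical up/down credit ledger with a per-rater budget."""

    def __init__(self, budget_per_rater: int = 5):
        self.budget_per_rater = budget_per_rater
        self.spent = defaultdict(int)   # rater -> number of votes used
        self.votes = defaultdict(dict)  # target -> {rater: +1 or -1}

    def vote(self, rater: str, target: str, value: int) -> bool:
        """Record a vote; return False if the rater's budget is exhausted."""
        if value not in (1, -1):
            raise ValueError("value must be +1 or -1")
        if rater == target:
            raise ValueError("raters cannot vote on themselves")
        if self.spent[rater] >= self.budget_per_rater:
            return False
        self.spent[rater] += 1          # every vote has a cost
        self.votes[target][rater] = value
        return True

    def rater_weight(self, rater: str) -> float:
        """Weight in (0, 1]: lower for raters who deviate from the mean vote."""
        deviations = [abs(v[rater] - mean(v.values()))
                      for v in self.votes.values() if rater in v]
        if not deviations:
            return 1.0
        return 1.0 / (1.0 + mean(deviations))

    def score(self, target: str) -> float:
        """Weighted sum of the votes received by `target`."""
        received = self.votes.get(target, {})
        return sum(value * self.rater_weight(rater)
                   for rater, value in received.items())
```

With two raters voting +1 and one voting -1 on the same user, the dissenting rater ends up with a lower weight than the other two, so a lone down-vote moves the score less than a lone up-vote would.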

Summary of feedback received to date, December 22, 2017

Hello and happy holidays!

I’ve read over all the feedback and comments we’ve received to date on Meta Wiki and English Wikipedia, as well as what was privately emailed, and summarized it in depth on the talk archive page (along with archiving some sections). We’re looking for non-English discussions and users willing to help translate, and will provide a summary of those discussions in January.

Here is an abridged summary of common themes and requests:

- Anything our team (the Wikimedia Foundation’s Anti-Harassment Tools team) will build will be reviewed by the WMF’s Legal department to ensure that anything we build is in compliance with our privacy policy. We will also use their guidance to decide if certain tools should be privileged only to CheckUsers or made available to all admins.

- UserAgent and Device, if OK’d by Legal, would deter some blocks but won’t be perfect.

- There is a lot of energy around using email addresses as a unique identifiable piece of information to either allow good-faith contributors to register and edit inside an IP range, or to cause further hurdles for sockpuppets. Again, it wouldn’t be perfect but could be a minor deterrent.

- There was support for proactively globally blocking open proxies.

- Some users expressed interest in improvements to Twinkle or Huggle.

- There is a lot of support for building per-page blocks and per-category blocks. Many wikis attempt to enforce this socially but the software could do the heavy lifting.

- There has been lengthy discussion and concern that blocks are often made inconsistently for identical policy infractions. The Special:Block interface could suggest block length for common policy infractions (either based on community-decided policy or on machine-learning recommendations about which block lengths are effective for a combination of the users’ edits and the policy they’ve violated.) This would reduce the workload on admins and standardize block lengths.

- Any blocking tools we build will only be effective if wiki communities have fair, understandable, enforceable policies to use them. Likewise, what works for one wiki might not work for all wikis. As such, our team will attempt to build any new features as opt-in for different wikis, depending on what is prioritized and how it is built.

- We will aim to keep our solutions simple and to avoid over-complicating these problems.

- Full summary can be found here.

The Wikimedia Foundation is on holiday leave from end-of-day today until January 2 so we will not be able to respond immediately but we encourage continual discussion!

Thank you everyone who’s participated so far! — Trevor Bolliger, WMF Product Manager 🗨 20:19, 22 December 2017 (UTC)

- Gave some comments on the enwiki page on this (mainly, because I saw your post there first). Jo-Jo Eumerus (talk, contributions) 10:44, 23 December 2017 (UTC)

Limit cookie blocks to 1 year only

Limit cookie blocks to a year maximum. I know that the Wikimedia Foundation won’t take anything any blocked user says seriously and that this will land on deaf ears, but this culture of keeping editors who genuinely want to improve content away needs to end. Cookie blocks are by far the worst offender of this: they don't stop disruptive behaviour or malicious sockpuppetry, they only stop people with no intent on disruption from ever editing. Let's take a scenario where a user is new to Wikipedia but sees an error on a medical page. Believing that this error could have negative real world consequences, they remove this false information; another editor sees this as vandalism and reverts this without giving a reason. The user then removes the misinformation again and writes down why it is wrong; the other editor (usually a rollbacker or admin) reverts again and doesn't give a reason. Because this is seen as “vandalism”, the “established editor” (a title given based on a user’s edit count, not on any actual measurement of meaningful contributions) can revert as much as they want. As this new editor doesn't have many other edits to their name they will probably immediately face an indefinite block with no talk page access or way to appeal; either they don't know that the UTRS exists or they contribute to a project without a UTRS and they’re essentially banned for life. Maybe in time they learn more about how Wikipedia works, they finally figure out what a talk page is, and a year later they still see that medical misinformation standing there. They try to go to the talk page and explain with a reliable scientific source why it's dangerous misinformation and then they hit their indefinite cookie block. Why?
Because they’re not allowed to edit for the rest of their life, and while real, actual vandals will just do whatever they want, users like this end up getting the heaviest punishments (because blocks are ALWAYS punitive, if they truly were preventive good content wouldn't get deleted solely based on evasion, or even delete pre-block content after a block solely based on a later block), this is why editor retention is shrinking and why women are reluctant to join. A hostile culture that exists because of hostile tools, instead of talking about how to expand the blocking tools, why not limit them so users aren’t site-banned? The aforementioned hypothetical user could've simply been blocked from the “Undo” button or from editing that specific page, but they are banned from the entire site, who does this help? Only the admin wanting to brag about their “500,000 log actions” or whatever, not the project and certainly not the readers. --Donald Trung (Talk 🤳🏻) (My global lock 🔒) (My global unlock 🔓) 11:07, 28 December 2017 (UTC)

- Cookie blocks are plain stupid. They are so easy to circumvent that it isn't even fun to point out how to do it. Remove it, it is simply "blocking by obscurity". — Jeblad 13:44, 28 December 2017 (UTC)

Harassment need a user masking system, not a blocking one

Considering that:

- w:Harassment concerns one or a few people behaving wrongly towards another person, considered the victim.

- Person(s) guilty of harassment can at the same time be useful and effective in other aspects of Wikimedia projects.

- Blocking Wikimedia access, as a form of digital ostracism, is a very strong punishment that can create moral frustration and technical problems for other project users (disagreement, or blocked IP access).

- Blocking decisions are a source of community conflicts.

I think that allowing any user to hide the actions of any other user or IP account, except those of administrators, seems much more appropriate than blocking Wikimedia access.

Advantages of a hiding system are:

- Victims can deal with the situation themselves, avoiding the risk of community involvement.

- Person(s) guilty of harassment will lose their power to harass without losing their ability to edit the projects.

- The risk of blocking the wrong IP by mistake disappears.

- Failure to respond is part of good practice to combat harassment.

It seems to me to be the concrete anti-harassment solution in use on plenty of web platforms such as Facebook, RBNB, etc. Why not on the MetaWiki platform?

Lionel Scheepmans ✉ Contact French native speaker, sorry for my dysorthography 15:08, 29 December 2017 (UTC)

- "Not technically or socially feasible" would be the problem I think. Page histories and logs would become a total mess if one could hide a particular person's actions in them. Also, a number of people mistake disagreement or critique of bad editing practices for harassment. This idea works for a social media platform, not for projects like encyclopedias that have general obligations for readers and whose participants thus need to be held responsible for their contributions to a degree rather than being allowed to hide other people's comments on them away. Jo-Jo Eumerus (talk, contributions) 10:02, 30 December 2017 (UTC)

- @Lionel Scheepmans: Thank you for your comments! I agree with Jo-Jo that some of this will be incredibly complex to execute on article pages and histories, but I can see room for this on talk pages, userpages, notifications, and other forms of communicating on wiki. Our team has built two Mute features, and it has been proposed we extend this to more aspects of the wiki. (I'm particularly curious about building a system that collapses all talk page comments made by Muted users — some users on a popular Wikipedia have already built a system in their personal JavaScript...) If you have more comments on this, I would encourage you to collect and share them on Talk:Community health initiative/User Mute features. Thank you! — Trevor Bolliger, WMF Product Manager 🗨 02:04, 3 January 2018 (UTC)

- Thanks for the replies. I understand. I will take a look later.

- Masking the rolled back contributions would increase the readability of the history a lot, without being very hard or difficult to implement. — Jeblad 23:41, 17 January 2018 (UTC)

- I don't think hiding histories is very useful as most people read the page content directly. And what about the other problem I mentioned. Jo-Jo Eumerus (talk, contributions) 09:56, 18 January 2018 (UTC)

- I only commented on rolled back contributions. It is pretty straight forward to do. The rest of your post is about interpretation of critique, but intermingled with style of writing, which is not actionable thus I do not want to comment on that. — Jeblad 16:48, 20 January 2018 (UTC)

Lionel Scheepmans, why don't you change the title to "Embrace Harassment"? I totally disagree with your approach. In el.wikipedia the harassment comes directly from the admins; their actions have indeed been hidden onwiki, but they are still admins, they have never said they are sorry, and we have a ruined community. Is this your vision? ManosHacker talk 16:29, 23 February 2018 (UTC)

- ManosHacker, I understand your point of view, and I have had some problems with admins on fr.wikipedia too. But admins have the right to block people and to unblock themselves. So the first step against admin harassment is to withdraw administrative rights. But anyway, a hiding system does not fit with page histories and so on, so that's not a good option. Finally, the best way to face harassing behavior is to deny it: never answer, and remove the messages from your user or talk page. Good luck in el.wikipedia! Lionel Scheepmans ✉ Contact French native speaker, sorry for my dysorthography 10:41, 24 February 2018 (UTC)

- For the time being we have continuous leakage in productive users and admins, who are now missing from editing. I am not after the removal of rights, people make mistakes. If the harasser says he/she is sorry, in public, the offensive material is removed and the harasser states in public, that he/she will not repeat it again, then the basis is set for community health and there is no need for extreme punishment. There is no other way. ManosHacker talk 20:01, 24 February 2018 (UTC)

- It's a pity for an experienced user to tell lies that reverse reality. Please, ManosHacker, stick to the substance of your argument and do not wander around making inaccurate claims that other users are not able to check.--Diu (talk) 05:48, 27 February 2018 (UTC)

Perfection not required

A lot of the suggestions seem to get shot down using the argument that the bad user could get around it by doing SuchAndSuch. I agree that a very tech-savvy bad user can evade a lot of proposed solutions, but most people are not that tech-savvy. Many suggestions would be a barrier to some users, not all, but some. I don't think we should be looking for perfect solutions (because they probably don't exist) but for ones that would be effective against a lot of bad users. For some, we will still have to rely on human intuition as we currently do when we "smell a rat". Kerry Raymond (talk) 08:56, 3 January 2018 (UTC)

- @Kerry Raymond: I 100% agree. The old proverb "en:Perfect is the enemy of good" rings true here. — Trevor Bolliger, WMF Product Manager 🗨 22:28, 3 January 2018 (UTC)

Not all solutions need to be 100% technical, let's use people too

A lot of blocked users have particular topic interests (articles, categories) or particular dislikes (certain other users). Is it possible to do some automated analysis of a blocked user's "fingerprints", proactively scan new account/IP behaviour against the fingerprints of recently blocked users, and red-flag those users who seem to match for closer human scrutiny of their edits? These red flags could then be drawn to the attention of the users most likely to be willing and able to monitor that new user's activities (e.g. those who previously drew attention to the bad behaviour, or perhaps the WikiProject in which the blocked user was active). People are still better than machines at detecting patterns; I can spot a couple of regular socks within a couple of edits (the pattern is just that obvious) but I can't do that in general. Could we have a tool so that someone can have their watchlist extended with all the articles the blocked user has previously edited (perhaps with some time limit, or on the basis of "fingerprinting", to keep it manageable), so that the user can easily spot the sock's return through their normal watchlist? Kerry Raymond (talk) 08:56, 3 January 2018 (UTC)

- This proposal falls a little outside the scope of this talk page's discussion. Building tools for users to identify sockpuppets does fall under the purview of the Anti-Harassment Tools team, so in the future we may explore such an idea. In general, our team wants to build tools that empower humans to make better decisions. — Trevor Bolliger, WMF Product Manager 🗨 22:34, 3 January 2018 (UTC)

- But this is exactly what I am proposing?! Tools to make it easier for people to detect returning blocked users. For example, I had to add several dozen articles manually to my watchlist because of a recently blocked user, who I suspect will return as a sockpuppet/meatpuppet (a blocked paid user whose stated mission was to update a group of articles, so I am guessing that they will be back). Doing it manually was a big waste of my time. If we don't make it easier for good faith users to detect, monitor and report problem users, more people will do (as many already do) and just move on hoping someone else will deal with it. Kerry Raymond (talk) 00:43, 4 January 2018 (UTC)

- I have been operating on the assumption that this discussion is about the blocking tools themselves, for use when the community/admin/steward/etc has decided "this user needs to be blocked" or in the case of Problem #3 "a full block is not appropriate but this situation needs to be addressed." But you are correct — your suggestion as an evaluative tool is another way to address Problem #1. Most of this work is done manually by interested users or CheckUsers, and the software could certainly help perform a lot of the repetitive evaluative work. — Trevor Bolliger, WMF Product Manager 🗨 18:31, 4 January 2018 (UTC)
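As a rough illustration of the "fingerprint" idea raised in this thread, the sketch below compares the set of pages a new account has edited with the sets edited by recently blocked accounts and lists the blocked accounts with a strong overlap, so that a human can take a closer look. Everything here is an assumption made for illustration: the function names, the use of Jaccard similarity, and the 0.5 threshold are hypothetical and do not describe any existing MediaWiki feature.

```python
from typing import Dict, List, Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Return the share of pages two accounts have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_for_review(
    new_pages: Set[str],
    blocked_fingerprints: Dict[str, Set[str]],
    threshold: float = 0.5,  # hypothetical cut-off, to be tuned by humans
) -> List[str]:
    """List blocked accounts whose edited pages overlap strongly with new_pages.

    The result is only a hint for human reviewers, ordered from the
    strongest overlap to the weakest; it is not a verdict.
    """
    scores = {
        name: jaccard(new_pages, pages)
        for name, pages in blocked_fingerprints.items()
    }
    matches = [name for name, score in scores.items() if score >= threshold]
    return sorted(matches, key=lambda name: -scores[name])
```

A score like this would only narrow down which new accounts deserve attention; the decision would stay with the people who know the blocked user's pattern.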

Hiding usernames of infinite-blocked vandal accounts

There is a problem [1] reported in pl.wiki right now about many usernames created by wikinger (I think that wikinger may have created hundreds (thousands?) of accounts by now, but not all of his accounts are problematic in this case). Many of these accounts are very similar (sometimes with irreverent or abusive insertions) to the accounts of other Wikipedians. These usernames are visible to anyone, and even after they are blocked they remain an indirect means for wikinger to harass users. Hiding them would be one solution; the other, proposed in the pl.wiki discussion, is to rename the problematic accounts.

By the way, I can also mention here the previous problem with this vandal described in phab:T169268. Fortunately, this has been resolved by a permanent change of the maximum number of thanks for non-autoconfirmed users. But I think it should be kept in mind that similar situations may happen in the future on other wikis, and maybe allowing communities to change such a limit locally would be worth considering. Wostr (talk) 12:28, 4 January 2018 (UTC)

- The solution for Thanks spam can be applied to other wikis (or globally) if the problem spreads. And yes, when blocking an obvious attack username the software can make it easier to hide/rename the username to avoid future exposure in logs/histories. Good suggestion. — Trevor Bolliger, WMF Product Manager 🗨 18:34, 4 January 2018 (UTC)

Range contributions

While we are talking about improving block solutions, I would also like to draw attention to range contributions. We already have basic IP range support in Special:Contributions. That's all fine and dandy, but what's missing here is a dedicated page for ranges only. What I mean by this is something like the mock-ups in this ticket on Phabricator. This ticket and its sub-tickets have been quiet for half a year or more at this point. It would be nice if stuff like this would also be considered in the initiative, especially as IPv6 is becoming common these days, making blocking harder for non-technical administrators. --Wiki13 talk 01:16, 7 January 2018 (UTC)

- One could perhaps put such range support in other features such as logs or Special:Nuke. Jo-Jo Eumerus (talk, contributions) 10:15, 7 January 2018 (UTC)

- @Jo-Jo Eumerus: I'm not 100% familiar with every aspect of Nuke (does it rollback edits from accounts associated with IPs or just the IP edits? Who is permissioned to use it? Does it warn/prevent Nukes for a large quantity of edits or over a certain period of time?) It's a powerful tool and if it had IP range support, one misplaced '0' could be gnarly. — Trevor Bolliger, WMF Product Manager 🗨 17:36, 8 January 2018 (UTC)

- @Wiki13: Range Contributions turned out to be a much bigger strain on databases than we originally planned, but it is certainly something we can look into for our 2018 blocking work. It certainly would be a way of addressing "Problem 1. Username or IP address blocks are easy to evade by sophisticated users". — Trevor Bolliger, WMF Product Manager 🗨 17:36, 8 January 2018 (UTC)

- @TBolliger (WMF): thanks for the answer and clarification. I'll be looking forward to the solutions you guys will be putting out in the coming few months, whatever those might be. --Wiki13 talk 17:45, 8 January 2018 (UTC)
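To make the concern about a misplaced digit in a range concrete, here is a minimal sketch using Python's standard `ipaddress` module. It shows how quickly a range grows as the prefix gets shorter and how a tool could refuse ranges that are too broad. The function names and the prefix limits used as defaults are illustrative assumptions, not a description of how Special:Contributions or Special:Nuke behave.

```python
import ipaddress


def range_size(cidr: str) -> int:
    """Return how many addresses a CIDR range covers."""
    return ipaddress.ip_network(cidr, strict=False).num_addresses


def in_range(ip: str, cidr: str) -> bool:
    """Return True if the address falls inside the CIDR range."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(cidr, strict=False)


def is_too_broad(cidr: str, min_v4_prefix: int = 16, min_v6_prefix: int = 19) -> bool:
    """Return True if the range is wider than the (hypothetical) allowed limit.

    A shorter prefix means a larger range, so anything below the minimum
    prefix length is rejected.
    """
    network = ipaddress.ip_network(cidr, strict=False)
    limit = min_v4_prefix if network.version == 4 else min_v6_prefix
    return network.prefixlen < limit
```

For example, `range_size("192.0.2.0/24")` covers 256 addresses while `range_size("192.0.0.0/16")` covers 65,536, so a single changed digit in the prefix can multiply the affected range by 256.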

Suggestion of an improvement of the way the time of a block is determined - from a discussion on de.WP

In the German-language Wikipedia, a user was blocked, unblocked and blocked again several times in a very complicated case. In this process mistakes happened because of problems with the way the length of a block is determined. This led to users feeling this person was treated unfairly. Several admins chimed in, stating that this kind of mistake happens because the process is confusing, especially when one wants the block to end at a certain time and day rather than after a length chosen from the drop-down menu. Dealing with different time zones is additionally confusing, especially with daylight saving time in summer, they stated. They would very much like the blocking tools to be improved, so that it is easier to determine the endpoint of a block without having to calculate time zones. One helpful option might be to add a feature that allows a block to be reinstated for the original length (e.g. after a user has been unblocked under restrictions). It looks like several admins of the German community would appreciate it if this could be looked into. They asked me to bring the issue to the attention of the AHT team. For more background, see the discussion on de.WP here. --CSteigenberger (WMF) (talk) 12:38, 10 January 2018 (UTC)

- Hi Christel, thank you for bringing this example and suggestions to our discussion! Adding a datetime picker to the block interface has been suggested before, but we've never thought of how timezones can affect this. The software can definitely help with this so mental math isn't required.

- By "allow a block to be reinstated" do you suggest adding an ability to 'pause' the block for a brief period of time? If this is to allow the user to participate in on-wiki discussions, some users have suggested an alternative strategy: add an option to allow the user to also participate on a small list of pages, such as ArbCom or Noticeboards. (This could be an expansion of the "Prevent this user from editing their own talk page while blocked" option, or a new option.) This may prevent the user from feeling jerked around and treated unfairly. Do you or the de.WP community have thoughts on this? — Trevor Bolliger, WMF Product Manager 🗨 19:40, 10 January 2018 (UTC)

- My thoughts without checking back with the community - this would work very well for most cases on de.WP, as we regularly unblock users to give them the chance to take part in a discussion about a request to unblock them. --CSteigenberger (WMF) (talk) 08:49, 11 January 2018 (UTC)

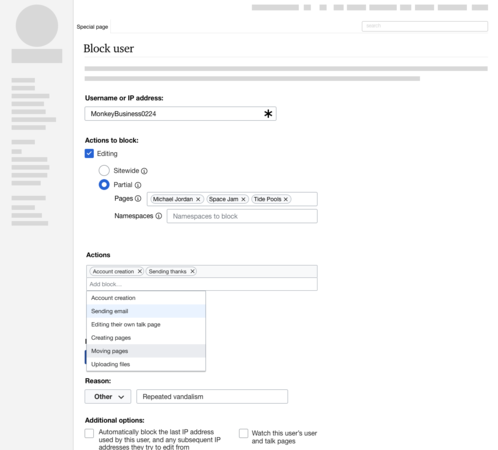

@CSteigenberger (WMF): I have created some potential mockups for this. They are on phab:T132220 and below.

- We should reuse the existing icons from UploadWizard. The default state would be the pencil, which would provide the same functionality as Special:Block has today.

- On click of the calendar icon, it would change the list dropdown to the dateInputWidget. The block would still be saved the same way; the calculation would happen before 'Block this user' is clicked.

We want to start building this next week. Thoughts? — Trevor Bolliger, WMF Product Manager 🗨 22:24, 22 February 2018 (UTC)

- Sending the question on to the German community now! --CSteigenberger (WMF) (talk) 08:48, 23 February 2018 (UTC)

- I like the idea and I think this will be helpful, however, making a block end on a specific day has been the easier part of the calculation. The difficult part has always been calculating the right hour. Could you include a functionality to also pick a time of day and time zone (with the wiki's and specific day's standard time zone being suggested automatically)? Thanks a lot, → «« Man77 »» [de] 09:40, 23 February 2018 (UTC)

- I'd like to second Man77 [de]. The day never caused any problem, but the hour did, especially in winter time. --Sargoth (talk) 11:36, 23 February 2018 (UTC)

- There is a DateTimeInputWidget that we could use that would allow you to select/type a calendar date and type in a time. I've added a screenshot, to the right. If we use this, should the timezone calculation be based on the blocking user's timezone preference, or the blocked user's? — Trevor Bolliger, WMF Product Manager 🗨 17:11, 23 February 2018 (UTC)

- Good question. Maybe admins of wikis where different time zones are much more of an issue can say more about that, in German WP we practically only have to deal with daylight saving time. I suppose it would be helpful already if the times shown in Special:log/block as start and end date, and also the time entered in Special:block refer all to the same time zone. So I guess it's the blocking user's preference that matters more.

- Partial lifts of blocks are probably the even better way to go, but this proposal here still looks like a nice improvement to me. → «« Man77 »» [de] 13:42, 26 February 2018 (UTC)

- While I think a day/hour-pick-thing would be an improvement, the idea that you could suspend a block is more appealing. --DaB. (talk) 14:20, 23 February 2018 (UTC)

- I agree. Partially lifting a block is more useful, and closer to the sense of blocking, than reinstating a previous block. --Holmium (talk) 16:41, 23 February 2018 (UTC)
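The time zone arithmetic discussed in this thread can be sketched as follows. This is a minimal illustration, not the planned Special:Block implementation: it assumes the admin enters the end of the block as a wall-clock time in a chosen time zone and that the expiry is then handled in UTC. The function names are hypothetical. With a DST-aware zone object such as `zoneinfo.ZoneInfo("Europe/Berlin")` from Python's standard library, the daylight saving offset for the chosen day is applied automatically.

```python
from datetime import datetime, timedelta, timezone, tzinfo


def expiry_utc(local_end: datetime, tz: tzinfo) -> datetime:
    """Interpret a naive wall-clock end time in tz and return it in UTC."""
    return local_end.replace(tzinfo=tz).astimezone(timezone.utc)


def block_duration(start_utc: datetime, local_end: datetime, tz: tzinfo) -> timedelta:
    """Return how long a block starting at start_utc lasts until local_end."""
    duration = expiry_utc(local_end, tz) - start_utc
    if duration <= timedelta(0):
        raise ValueError("the end of the block must be later than its start")
    return duration


# Example with fixed offsets standing in for CET (+1) and CEST (+2):
# a block set at noon CET on 24 March 2018 that should end at noon CEST
# on 26 March 2018 lasts 47 hours, not 48, because the clocks moved
# forward on 25 March 2018.
cest = timezone(timedelta(hours=2))
start = datetime(2018, 3, 24, 11, 0, tzinfo=timezone.utc)  # 12:00 CET
example = block_duration(start, datetime(2018, 3, 26, 12, 0), cest)
```

Doing the conversion in software is what removes the mental math: the admin picks the local end time, and the one-hour shift is accounted for by the time zone data rather than by hand.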

Block notices on mobile provide insufficient information about the block

I've already posted this to phabricator – see phab:T165535 – but I was hoping to get this on the radar as well. On the English Wikipedia, it is policy for administrators, upon blocking a user, to provide them certain information about the block – see w:en:WP:EXPLAINBLOCK. For this reason, virtually all block reasons include a wikilink to relevant policies that explain to the blocked user the issues that were identified in their contributions. For example, if a user is blocked for edit warring, a link is provided in the block log to w:en:Wikipedia:Edit warring. Sometimes, we even use templates in the block reason. A few common ones are w:en:Template:CheckUser block and w:en:Template:School block.